Herman, I was looking at our automation stack this morning and I realized something slightly depressing. We have spent the last few months building these incredibly sophisticated agentic workflows, but the way I actually interact with them is by sending a message to a bot in a messaging app that looks like it was designed in two thousand twelve. It is like we have built a Ferrari engine but we are steering it with a pair of rusty bicycle handlebars. It is the classic Slack-as-Operating-System fallacy, and I think I am finally hitting a wall with it.

It is the ultimate bottleneck of the current era, Corn. My name is Herman Poppleberry, and I have been losing sleep over this exact structural failure for weeks. We are in this weird transitional phase where the back-end logic of AI agents has completely outpaced the front-end primitives we use to control them. Today's prompt from Daniel hits the nail on the head. He is asking about the lack of professional, secure platforms designed specifically to serve as an interface for agentic back ends, rather than just piggybacking on consumer chat apps like Slack or Telegram. We are trying to run twenty twenty-six level intelligence through a nineteen nineties level text box.

It is a great point from Daniel because, let’s be honest, if your mission-critical business operation depends on a sloth-shaped emoji reaction to trigger a multi-million dollar wire transfer, you are probably doing something wrong. Why are we still treating autonomous agents like they are just another person in a group chat? It feels like we are forcing a superintelligence to act like a distracted intern who just happens to be very fast at typing.

Because chat was the low-hanging fruit. It was the easiest way to give a large language model a home when we were just playing with text-in, text-out. But as of early twenty twenty-six, we are seeing that foundation start to crumble. In a professional enterprise environment, a messaging app is a terrible place for an agent to live. It is ephemeral, it is unstructured, and it is incredibly noisy. If you are managing a fleet of agents, you do not need a chat bubble. You need a cockpit. You need a control plane that visualizes state, not just a scroll of text. The reality is that as of the first quarter of twenty twenty-six, over seventy percent of enterprise agentic workflows still rely on Slack or Microsoft Teams as their primary human-agent interface. That is a staggering amount of technical debt being built on top of a platform meant for lunch memes and watercooler talk.

I love that image of a cockpit. Right now, it feels more like I am shouting instructions into a crowded room and hoping the right person hears me. When we talk about the Agentic U-I Gap, what is the actual technical debt we are accruing by sticking with these chat-based interfaces? I mean, it works for a quick query, but where does it fall apart when things get complex? Is it just a matter of aesthetics, or is there a deeper functional rot here?

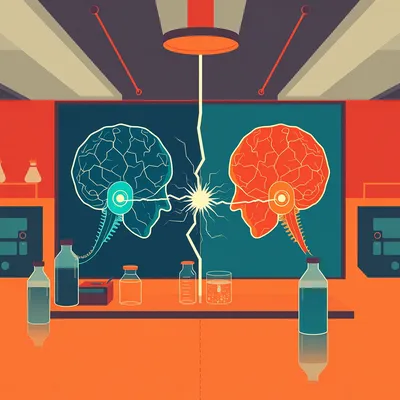

It falls apart at state management. This is the core issue. A chat interface is essentially a linear string of text. It is a one-dimensional timeline. But an agentic workflow, especially if you are using something like LangGraph or n eight n, is a graph, and often one with loops in it. It is a set of nodes, decision branches, and parallel processes. When you try to squash a complex, branching logic tree into a flat chat transcript, you lose all the context of where the agent actually is in its process. If an agent hits an error at step four of a ten-step process, how does it represent that in Slack? It just sends a message saying "I hit an error." Then you have to scroll back through five hundred lines of chat history to figure out what it was doing, what the variables were at that specific node, and why it branched left instead of right.
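To make the state-management point concrete, here is a minimal sketch of a trace keyed by node instead of by chat message. The `NodeRun` and `WorkflowTrace` names, the node names, and the variables are all hypothetical; this is not how LangGraph or n eight n store state, only an illustration of keeping "what the variables were at that node" one lookup away.

```python
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class NodeRun:
    """One executed node: its name, the variables it saw, and any error."""
    node: str
    variables: dict[str, Any]
    error: Optional[str] = None


@dataclass
class WorkflowTrace:
    """Hypothetical per-run trace keyed by node rather than by chat message."""
    runs: list[NodeRun] = field(default_factory=list)

    def record(self, node: str, variables: dict[str, Any],
               error: Optional[str] = None) -> None:
        # Copy the variables so later mutations do not rewrite history.
        self.runs.append(NodeRun(node, dict(variables), error))

    def first_failure(self) -> Optional[NodeRun]:
        # Return the earliest node that reported an error, if any.
        return next((run for run in self.runs if run.error is not None), None)


trace = WorkflowTrace()
trace.record("fetch_invoice", {"invoice_id": 17})
trace.record("validate_total", {"invoice_id": 17, "total": 120.0})
trace.record("check_budget", {"invoice_id": 17, "total": 120.0, "budget": 100.0})
trace.record("request_payment", {"invoice_id": 17, "total": 120.0},
             error="budget exceeded")

failure = trace.first_failure()
```

With a structure like this, the failure at step four comes back with its node name and its variables attached, so nobody has to scroll through a transcript to reconstruct them.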

And then you have to hope the context window hasn't already purged the important bit. It is like trying to debug a computer program by reading a printout of the console log while the computer is still running. It is madness. You are basically guessing at the internal state based on the external output.

It really is. And there is also the issue of context window pollution. Every time you interact with an agent in a chat app, you are stuffing the history of that conversation back into the model's prompt. Eventually, the signal-to-noise ratio gets so bad that the agent starts hallucinating or forgetting the original goal because it is distracted by three days of human-in-the-loop chatter. We need a U-I that separates the agent's internal reasoning graph from the human interaction layer. We need to stop thinking about "conversations" and start thinking about "state transitions." If I change a parameter in a dashboard, that should update a specific variable in the agent's memory without adding five paragraphs of "Hey Herman, I have updated that for you" into the context window.
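One way to picture "state transitions instead of conversations" is a session object that keeps working state apart from the transcript. This is a sketch under assumptions: `AgentSession`, `set_parameter`, and `build_prompt` are made-up names, and real frameworks assemble prompts in their own ways.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class AgentSession:
    """Hypothetical session that keeps working state apart from the transcript."""
    state: dict[str, Any] = field(default_factory=dict)
    transcript: list[str] = field(default_factory=list)

    def say(self, message: str) -> None:
        # Conversational turns accumulate and would be replayed into the prompt.
        self.transcript.append(message)

    def set_parameter(self, key: str, value: Any) -> None:
        # A dashboard edit is a state transition: it changes one variable
        # and adds nothing to the transcript.
        self.state[key] = value

    def build_prompt(self, goal: str) -> str:
        # The model sees the goal and the current state, not the chatter.
        state_lines = [f"{k} = {v!r}" for k, v in sorted(self.state.items())]
        return "\n".join([f"GOAL: {goal}", "STATE:", *state_lines])


session = AgentSession()
session.say("Hey, can you bump the budget a bit?")
session.set_parameter("budget_usd", 500)
session.set_parameter("budget_usd", 750)
prompt = session.build_prompt("Book travel for the offsite")
```

Two dashboard edits leave exactly one value in state and zero extra lines in the prompt, which is the whole point of keeping the two layers separate.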

Which brings us to the security side of things. Daniel mentioned that using Telegram or Slack for business-critical operations feels unprofessional, and I would add that it feels downright dangerous. If I am an enterprise, do I really want my sensitive internal agentic logic flowing through a third-party messaging A-P-I? We are talking about agents that might have write-access to databases or financial systems.

You definitely do not. That is why the release of Palo Alto Networks Prisma A-I-R-S yesterday, on March twenty-fifth, is such a big deal. They are basically launching a dedicated secure browser and control plane designed specifically for human-agent collaboration. It is meant to stop the sixty-two percent increase we have seen in A-I-driven social engineering attacks. When an agent has access to your company's internal databases, you cannot just have it sitting in a chat app where a rogue employee or a compromised account could start "vibe coding" their way into a data breach. In a chat app, the "permissions" are usually just "can this person talk to the bot?" In a professional agentic U-I, permissions are granular and tied to specific nodes in the workflow.
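As a rough illustration of permissions tied to workflow nodes rather than to "can this person talk to the bot," consider the sketch below. The roles, node names, and the `NODE_PERMISSIONS` table are invented for the example and do not describe any vendor's product.

```python
# Hypothetical role-to-node policy; node and role names are illustrative.
NODE_PERMISSIONS: dict[str, set[str]] = {
    "draft_report": {"analyst", "manager"},
    "query_database": {"analyst", "manager"},
    "approve_wire_transfer": {"manager"},
}


def can_act(role: str, node: str) -> bool:
    """Allow an action only if the role is listed for that exact node."""
    # Unknown nodes default to deny rather than allow.
    return role in NODE_PERMISSIONS.get(node, set())


def authorize(role: str, node: str) -> None:
    """Raise instead of silently continuing when the role is not allowed."""
    if not can_act(role, node):
        raise PermissionError(f"role {role!r} may not act on node {node!r}")
```

The design choice worth noticing is the default: a node that nobody has configured is denied, not allowed.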

Vibe coding. Is that what we are calling it now? I usually call it "Herman's Friday night hobby." But you are right. The security perimeter for an agent is much harder to define than for a human. An agent can move faster and break things at a scale that a human intern never could. If I give an agent a command in Slack, there is no easy way to verify that the command came from an authorized state of mind, so to speak. It is just text.

And that is why the NVIDIA Agent Toolkit that dropped on March twentieth is so relevant here. They included something called OpenShell, which provides secure runtimes, and a framework called A-I-Q. The whole point of A-I-Q is to standardize how agents report their status and request human intervention. Instead of the agent saying "Hey, can you check this?", it sends a structured data packet that describes its current node, its confidence score, and the specific permission scope it is requesting. It treats the human as a high-level supervisor with a clear dashboard of "Pending Actions," not just another recipient of a notification.
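A structured request of the kind described here might look something like the sketch below. To be clear, this is not the A-I-Q schema, which this example makes no claim to reproduce: the `InterventionRequest` class and every field name are assumptions chosen to mirror the three things mentioned (current node, confidence score, permission scope).

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class InterventionRequest:
    """Hypothetical packet; field names are illustrative, not a real schema."""
    agent_id: str
    current_node: str
    confidence: float      # expected range: 0.0 to 1.0
    requested_scope: str   # e.g. "payments:write"
    summary: str

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")


def pending_actions(requests: list[InterventionRequest]) -> list[InterventionRequest]:
    # Show the least confident requests first: they need a human most.
    return sorted(requests, key=lambda r: r.confidence)


requests = [
    InterventionRequest("agent-7", "final_approval", 0.91,
                        "payments:write", "Pay invoice 17"),
    InterventionRequest("agent-2", "vendor_match", 0.42,
                        "vendors:read", "Ambiguous vendor name"),
]
queue = pending_actions(requests)
payload = json.dumps(asdict(queue[0]), sort_keys=True)
```

Because each request is data rather than prose, a "Pending Actions" view can sort, filter, and serialize it without parsing a sentence.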

See, that sounds like a professional interaction. It is the difference between a contractor calling you to say "The house is broken" and a contractor sending you a digital blueprint with a red circle around a leaky pipe and a button that says "Approve repair for five hundred dollars." One is a headache; the other is a decision.

That is the perfect analogy. We are moving toward what people are calling the Microservices Moment for agents. We are realizing that the agent's logic is the back end, and we need a specialized middleware to handle the U-I. Look at what Vercel announced today, March twenty-sixth. They launched J-S-O-N-Render, which is a framework for Generative U-I. This is a massive step toward closing that gap Daniel asked about.

Wait, I saw the headline but I didn't dig in. Is this the "Disposable Pixels" thing people have been talking about?

It is. The idea is that instead of an agent sending you a text message, the agent composes a functional React component at runtime based on what it needs from you. If it needs you to approve a budget, it does not ask "Is this okay?" It renders a real-time slider and a data table directly in your interface. You move the slider, you hit a button, and that sends a structured J-S-O-N object back to the agent's state machine. No text parsing required. No ambiguity. This is the "Componentized Interaction" model. The U-I is generated on the fly to solve a specific bottleneck in the workflow, and then it vanishes once the data is captured.
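The round trip described here, where the agent asks for a component and gets a structured object back, can be sketched without any particular framework. The component format and the `validate_response` helper below are hypothetical; they are not the J-S-O-N-Render format, only an illustration of "no text parsing required."

```python
from typing import Any

# Hypothetical component description an agent might emit instead of a question.
budget_component = {
    "type": "budget_approval",
    "fields": {
        "amount_usd": {"widget": "slider", "min": 0, "max": 1000},
        "approved": {"widget": "toggle"},
    },
}


def validate_response(component: dict[str, Any],
                      response: dict[str, Any]) -> dict[str, Any]:
    """Check a submitted form against the component that requested it."""
    fields = component["fields"]
    if set(response) != set(fields):
        raise ValueError(f"expected fields {sorted(fields)}, got {sorted(response)}")
    for name, spec in fields.items():
        value = response[name]
        if spec["widget"] == "slider":
            # bool is a subclass of int in Python, so reject it explicitly.
            if isinstance(value, bool) or not isinstance(value, (int, float)):
                raise ValueError(f"{name} must be a number")
            if not spec["min"] <= value <= spec["max"]:
                raise ValueError(f"{name} out of range")
        elif spec["widget"] == "toggle":
            if not isinstance(value, bool):
                raise ValueError(f"{name} must be true or false")
        else:
            raise ValueError(f"unknown widget {spec['widget']!r}")
    return response


accepted = validate_response(budget_component,
                             {"amount_usd": 500, "approved": True})
```

The agent's state machine only ever receives a value that already fits the shape it asked for, so an answer like "yeah, sure, roughly that" never has to be interpreted.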

That is a massive shift. It turns the human-in-the-loop into a structured A-P-I endpoint. I love the idea that the human is just another node in the graph, but with a better interface than a command line. It reminds me of what we talked about back in episode one thousand seventy-two, regarding the U-I Gap. We have been stuck in this chat paradigm for so long that we forgot that computers are actually good at displaying structured information. Why am I reading a paragraph about a supply chain delay when I could be looking at a map with a red blinking dot?

We really did forget. And this leads us to the Agent-U-I-S-D-K that was released back in January of this year. It provides a standardized schema for exactly what we are talking about—injecting U-I components into agent decision-making loops. It allows a developer to say, "When the agent reaches the 'Final Approval' node, don't send a message; instead, trigger a 'Review Component' with these specific data fields." This reduces the cognitive load on the human significantly. You aren't "chatting" with the agent; you are "operating" the agent.

I like that. It feels more like being a manager and less like being a technical support person for a confused algorithm. If I am using a tool like Sintra A-I, which Daniel's notes mentioned, I am managing "A-I Employees" like Soshie for social or Penn for content. They use a central knowledge layer they call Brain A-I. It is a gamified, professional dashboard. It feels like you are actually running a team. You see their status, their current task, and their output in a structured gallery, not a scrolling wall of text.

And that is the psychological shift we need. When you are in a chat app, you feel like you are talking to a peer, which leads to personification and, eventually, frustration when the agent doesn't "understand" you. When you are in a dedicated agentic U-I, you feel like you are operating a system. That distinction is vital for reliability. Think about the "efficiency paradox" Daniel mentioned. A-I is supposed to make us faster, but if I spend all day responding to five hundred Slack notifications from different agents, my workload has actually increased. I have just become a high-speed secretary for my own automations. I am context-switching every thirty seconds because every agent looks the same in my sidebar.

It is the "Human-in-the-Loop" versus "Human-on-the-Loop" debate. I would much rather be on the loop, looking down at a bird's eye view of the entire operation, than stuck in the loop, drowning in chat bubbles. If I have ten agents running, I want a single dashboard that shows me the "health" of those ten processes. If one is stalled, I want to see exactly which node it is stuck on.
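A bird's eye view of ten agents is, at its simplest, an aggregation over per-agent status records. The sketch below assumes a made-up `AgentStatus` record with three illustrative states; a real control plane would track far more.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class AgentStatus:
    agent_id: str
    node: str
    state: str  # illustrative values: "running", "waiting_on_human", "stalled"


def fleet_summary(statuses: list[AgentStatus]) -> dict[str, Any]:
    """Summarize fleet health and name the node each stalled agent is stuck on."""
    counts = Counter(s.state for s in statuses)
    stalled_at = {s.agent_id: s.node for s in statuses if s.state == "stalled"}
    return {"counts": dict(counts), "stalled_at": stalled_at}


statuses = [
    AgentStatus("agent-1", "fetch_orders", "running"),
    AgentStatus("agent-2", "final_approval", "waiting_on_human"),
    AgentStatus("agent-3", "reconcile_ledger", "stalled"),
]
summary = fleet_summary(statuses)
```

The useful part is `stalled_at`: it answers "which node is it stuck on" directly, without opening a single chat thread.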

And that is where the concept of "mitigation strategies" comes in. A professional interface like Vellum or Cognigy allows the agent to present multiple options to the human. Instead of the agent asking "What should I do next?", it says "Here are three potential paths. Path A costs fifty dollars and takes two hours. Path B is free but takes ten hours. Path C is risky but fast. Which one do you want?" The human just clicks a button. That is a structured, professional interaction that minimizes the cognitive load on the human and ensures the agent gets exactly the data it needs to continue. No more "Wait, what did you mean by 'fast'?" follow-up questions.
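The three-path example can be written as a closed set of options, so the human's answer is a key and not a sentence. This sketch is not taken from Vellum or Cognigy. Path A and Path B use the figures from the example; Path C's cost and duration are placeholder numbers invented here, since the example only says "risky but fast."

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PathOption:
    key: str
    cost_usd: float
    hours: float
    risk: str  # illustrative labels: "low" or "high"


OPTIONS = {
    "A": PathOption("A", cost_usd=50.0, hours=2.0, risk="low"),
    "B": PathOption("B", cost_usd=0.0, hours=10.0, risk="low"),
    # Placeholder numbers: the example gives no cost or duration for Path C.
    "C": PathOption("C", cost_usd=0.0, hours=0.5, risk="high"),
}


def resolve_click(clicked_key: str) -> PathOption:
    """Map a button click to an option; anything else is rejected."""
    try:
        return OPTIONS[clicked_key]
    except KeyError:
        raise ValueError(f"unknown option {clicked_key!r}") from None


chosen = resolve_click("B")
```

There is no way to answer "the fast one, I guess," which is exactly what removes the follow-up question.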

It also makes the whole thing auditable. If something goes wrong, you can look back at the dashboard and see exactly which button was clicked and what the agent's reasoning was at that specific moment. In a chat app, you are just scrolling and praying you can find the right timestamp, and God help you if someone deleted a message or if the thread got branched into a different channel.

Auditability is the big one for enterprise. If you are a Fortune five hundred company like Unilever, which Typewise works with, you cannot have your compliance department trying to piece together a chain of events from a bunch of deleted Slack messages. You need a versioned, immutable log of every state transition in the agentic workflow. This is why I think the "Chat" interface is a transitional fossil. It served its purpose to show us what L-L-Ms could do, but for actual work, it is a dead end. The technical debt of managing complex agent logic via chat logs is significantly more expensive in the long run than building a proper dashboard today.
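A log of state transitions that resists quiet edits can be approximated with a hash chain, where each entry commits to the one before it. The sketch below is an assumption about how such a log could work, not a description of any product mentioned here. It is tamper-evident rather than truly immutable: it detects edits after the fact but does not prevent them.

```python
import hashlib
import json
from dataclasses import dataclass, replace

GENESIS = "0" * 64  # placeholder hash for the first entry


@dataclass(frozen=True)
class LogEntry:
    index: int
    from_node: str
    to_node: str
    actor: str
    prev_hash: str
    entry_hash: str


def _digest(index: int, from_node: str, to_node: str,
            actor: str, prev_hash: str) -> str:
    body = json.dumps([index, from_node, to_node, actor, prev_hash])
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class TransitionLog:
    def __init__(self) -> None:
        self._entries: list[LogEntry] = []

    def append(self, from_node: str, to_node: str, actor: str) -> LogEntry:
        prev_hash = self._entries[-1].entry_hash if self._entries else GENESIS
        index = len(self._entries)
        entry = LogEntry(index, from_node, to_node, actor, prev_hash,
                         _digest(index, from_node, to_node, actor, prev_hash))
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash and check that each entry points at its predecessor.
        prev_hash = GENESIS
        for i, e in enumerate(self._entries):
            if e.index != i or e.prev_hash != prev_hash:
                return False
            if e.entry_hash != _digest(e.index, e.from_node, e.to_node,
                                       e.actor, e.prev_hash):
                return False
            prev_hash = e.entry_hash
        return True


log = TransitionLog()
log.append("draft", "review", "agent-7")
log.append("review", "final_approval", "herman")
log.append("final_approval", "done", "agent-7")
intact = log.verify()

# Simulate someone rewriting history: change who approved step two.
log._entries[1] = replace(log._entries[1], actor="someone_else")
still_valid = log.verify()
```

Compare this with a chat thread, where a deleted or edited message leaves nothing behind to check against.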

A transitional fossil. That is harsh, Herman. I think the chat bubble is crying now. But you are right. Even Bret Taylor's company, Sierra, is focusing on these high-stakes enterprise agents with incredibly strict guardrails. You cannot maintain those guardrails in a free-form chat environment where a user might accidentally—or intentionally—prompt-inject the agent into doing something stupid. You need a "Stable Shell" to host those "Disposable Pixels" we mentioned earlier.

And we should talk about the "Invisible Stack" idea. Some people argue that the best A-I interface is no interface at all—that it should just be embedded in the operating system. But for complex business logic, I think we will always need a dedicated "Agent Browser." Imagine a browser where every tab is not a website, but a different agentic process. Instead of a U-R-L bar, you have a state-visualization bar that shows the progress of the task. You can "peek" into the agent's thoughts, see its current tool-use, and intervene only when a red light flashes.

I can see the "Agent Browser" becoming the new enterprise standard. You log in in the morning, and instead of checking your email, you check your Agent Dashboard to see which agents need an approval or a decision. It is a much more proactive way to work. Daniel also asked about tasking agents. Right now, tasking an agent usually involves writing a long, rambling prompt. Is there a better way to do that in these new professional interfaces?

There is. Look at Taskade Genesis. They have this "vibe coding" feature where you can describe the interface you want in plain English, and it builds a functional management portal for your agents in minutes. But more importantly, tools like Composio are managing the "glue work"—the O-Auth, the rate limits, the technical handshakes. When you task an agent through a platform like that, you are not just giving it a prompt. You are giving it a specific set of tools and permissions that are managed through a secure U-I. You are defining the "scope of work" visually, which is much harder to mess up than a text prompt.

It is about "just-in-time" permissions, right? Like what Arcade dot dev is doing. The agent does not have permanent access to your database. It only gets access when it needs to perform a specific task, and it has to request that access through a secure browser flow that you approve. It is a "Human-as-an-A-P-I" model where the human provides the cryptographic handshake.

That is the only way to do it safely in twenty twenty-six. We have seen a sixty-two percent increase in A-I-driven social engineering because people are giving agents too much "permanent" access via these loose chat integrations. A professional U-I makes the permissioning process visible and intentional. If an agent asks for access to the payroll database for a task that involves scheduling a meeting, a professional U-I would flag that as an anomaly. In a chat app, you might just click "Allow" or type "Yes" without thinking because you are in the middle of five other conversations in that same app.
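The payroll-for-a-meeting example suggests two simple mechanisms: compare the requested scope against what the task plausibly needs, and make any grant expire. The sketch below is an illustration under assumptions, not how Arcade dot dev or any other platform works; the `EXPECTED_SCOPES` table, scope strings, and five-minute default are all invented.

```python
from dataclasses import dataclass

# Hypothetical mapping from task type to the scopes it plausibly needs.
EXPECTED_SCOPES: dict[str, set[str]] = {
    "schedule_meeting": {"calendar:write", "contacts:read"},
    "run_payroll": {"payroll:read", "payroll:write"},
}


@dataclass(frozen=True)
class Grant:
    scope: str
    expires_at: float  # seconds, on whatever clock the caller passes in


def review_request(task: str, scope: str) -> str:
    # Flag any scope the task type is not expected to need.
    return "ok" if scope in EXPECTED_SCOPES.get(task, set()) else "anomaly"


def issue_grant(task: str, scope: str, now: float,
                ttl_seconds: float = 300.0) -> Grant:
    """Issue a short-lived grant, refusing requests that look anomalous."""
    if review_request(task, scope) != "ok":
        raise PermissionError(f"{scope!r} is unexpected for task {task!r}")
    return Grant(scope, now + ttl_seconds)


def is_active(grant: Grant, now: float) -> bool:
    # Access ends at the expiry instant; there is no permanent access.
    return now < grant.expires_at


flag = review_request("schedule_meeting", "payroll:read")
grant = issue_grant("schedule_meeting", "calendar:write", now=1000.0)
```

In a real interface the "anomaly" result would be what turns a routine prompt into the red box that makes you stop and read.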

It is the "notification fatigue" problem. When everything looks like a chat message, nothing looks important. But when a red box pops up on your Agent Control Plane saying "Unauthorized Access Request," you pay attention. It breaks the "conversational" flow and forces a "security" flow.

Precisely. And this leads us to the second-order effects. As these U-Is get better, the reliability of the agents themselves will increase. A lot of the time when an agent fails, it is because of an ambiguous human-agent handoff. The human didn't give enough detail, or the agent didn't explain the problem clearly. A structured U-I forces both parties to be more precise. The agent provides a structured output, and the human provides a structured input.

It is like the difference between telling someone "Go buy some food" and giving them a digital grocery list with pictures and aisle numbers. The second one is much harder to mess up. We are finally giving the agents the "grocery list" interface they deserve.

And that is why the agentic A-I market is projected to hit fifteen billion dollars this year. We are moving past the "cool demo" phase and into the "operational excellence" phase. But you cannot have operational excellence without professional tools. If you are still building business-critical agents that only talk to you through Telegram, you are building on sand. You are one A-P-I change or one misunderstood emoji away from a total system collapse.

So, for the people listening who are currently building these "sand castles," what is the move? If I am a developer or a business owner and I realize I have an Agentic U-I Gap, where do I start? What are the practical takeaways here?

The first step is to audit your current stack. If your agent's "Stop" button is an emoji or a text command, you have a critical failure point. You need to look into dedicated orchestration dashboards. Stop building for "chat" and start building for "state." If you are a developer, start playing with the NVIDIA Agent Toolkit or Vercel's J-S-O-N-Render. Learn how to treat human feedback as a structured data packet rather than a conversational turn.

And if you are on the business side, maybe look at platforms like Vellum or Typewise that provide those governed, user-facing interfaces out of the box. You do not have to build a custom dashboard from scratch anymore. There are professional "cockpits" ready for you to plug your agents into. The goal should be to reduce the number of "chat bubbles" you interact with and increase the number of "status indicators" you monitor.

There really are. And I think we will see a lot of the current "chat-first" platforms either evolve or die. Even Slack and Microsoft Teams are trying to pivot into being "agentic work operating systems," but they have so much legacy baggage—so much focus on "human-to-human" communication—that it is going to be hard for them to compete with a clean-sheet design meant specifically for agents.

It is hard to be a "cockpit" when you are also the place where people post pictures of their lunch. The cognitive environment is just wrong. I want my agent interface to feel serious, secure, and structured. I want to feel like I am in control of a high-performance machine, not like I am trying to manage a chaotic group chat. I want to see my agent's reasoning graph, not its typing indicator.

That is the shift. We are moving from conversational A-I to operational A-I. And operational A-I requires an operational interface. It is a fascinating time because the tech is there—the models are good enough. We just need to stop handicapping them with these "bicycle handlebar" interfaces. The next wave of productivity won't come from a new model with a trillion more parameters. It will come from the software layer that allows humans and agents to actually work together without losing their minds in a sea of chat bubbles.

Well, I for one am ready to trade in my handlebars for a flight deck. I think Daniel's prompt really highlighted the next big hurdle for the industry. It is not about making the models smarter anymore; it is about making the interaction more professional and reliable. We need to bridge that U-I Gap before we all drown in notifications.

I agree. We are seeing the birth of a whole new category of enterprise software. It is the "Agentic Operating System," and it is going to change how we think about work. It is about moving from "talking to A-I" to "orchestrating A-I."

I am already feeling more productive just thinking about it. Or maybe that is just the caffeine kicking in. Either way, this was a deep dive I didn't know I needed. Herman, you have successfully nerded out on U-I primitives, and I actually enjoyed it.

I will take that as a win. It is not every day I get a sloth to care about J-S-O-N-rendering and state machines.

Don't get used to it. Next week I might want to talk about something completely different, like why my smart toaster is trying to join a labor union. But for today, this Agentic U-I Gap is clearly the thing to watch. It is the missing link in the agentic revolution.

It definitely is. We have covered a lot of ground, from the technical debt of chat to the new secure browsers and generative U-I frameworks of twenty twenty-six.

Well, before we get too deep into the future of work, we should probably wrap this one up. If you want to dig deeper into the architectural side of this, I highly recommend checking out episode twelve hundred nine, where we talked about the Agent-First Shift and the "Dual-Track A-P-I Tax." It ties in perfectly with what we discussed today regarding the overhead of building for both humans and agents.

And if you are still feeling trapped in a chat app, go back and listen to episode one thousand seventy-two. It is the foundational argument for why we need to break out of the bubble. It is the "why" to today's "how."

Thanks as always to our producer, Hilbert Flumingtop, for keeping the show running smoothly behind the scenes.

And a big thanks to Modal for providing the G-P-U credits that power this show. They are the ones making sure we have the horsepower to keep these discussions going.

This has been My Weird Prompts. We will be back soon with more of your prompts and our deep dives into the weird and wonderful world of tech.

If you are enjoying the show, a quick review on your favorite podcast app helps us more than you know. It helps other people find us in the sea of A-I content.

You can also find us at myweirdprompts dot com for our full archive and all the ways to subscribe.

Or search for My Weird Prompts on Telegram to get notified the second a new episode drops. Just don't expect our notification bot to have a professional cockpit interface... yet.

We are working on it. Goodbye, everyone.

See ya.