#3437: Akko's Untapped Potential: History, Housing & Hurdles

Why does this 4,000-year-old UNESCO city get skipped by tourists and struggle economically despite being affordable?

#3436: Can Tiberias Escape Its Shabby Reputation?

A poor, Haredi-majority city on the Sea of Galilee bets big on tourism to reverse decades of decline.

#3435: Life on Israel’s Northern Edge

What’s it actually like living in Metula and Kiryat Shmoneh? A look at the north’s economy, security, and future.

#3434: Life Under 15 Seconds: Ashdod & Ashkelon

What it's really like to live in Israel's industrial south — cheaper rent, 15-second shelter warnings, and the country's best grilled meats.

#3433: The Same 12 Faces: Inside Israel's Tiny Acting Market

Why the same actors appear everywhere in Israeli TV—and what it means for working actors.

#3432: Do Rich Leaders Lose Touch? The Detachment Question

Can a leader who lives in luxury truly understand citizens struggling with housing costs and war fallout?

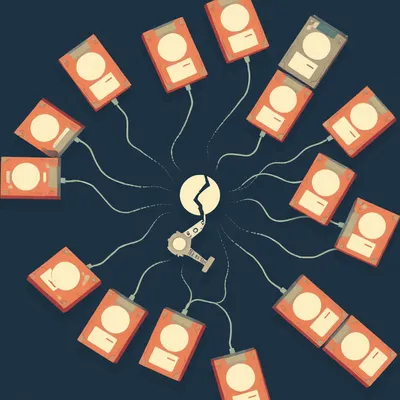

#3431: How YouTube Stores 500 Hours of Video Every Minute

YouTube's videos are shredded, replicated across global servers, and stored at a cost approaching zero. Here's how.

#3430: Urban Farming: Soil, Community, and Real Livelihoods

What does an urban farmer's life actually look like? Not the glossy renders—the real dirt and daily work.

#3429: IKEA's Hidden Waste: When Storage Bins Don't Fit

IKEA changes product dimensions every nine days. The environmental cost of those missing millimeters? Nobody's measuring it.

#3428: Logistics Careers That Survive AI

The jobs in logistics and warehousing that are actually growing — and the skills you need to get them.