Daniel ran an experiment last night, at eleven fifty-four p.m. UTC to be exact, and the thing that jumped out immediately wasn't the geopolitics. It was that five AI models, reasoning independently about the same crisis, didn't agree with each other. Not in minor ways. In ways that matter. He's calling it the Geopol Forecast Council, a lean spin-off of his Geopol-Forecaster project, and the question it's really asking is this: when you line up a blind-parallel panel of models from genuinely different training lineages and point them all at the same fast-moving situation, what do you learn from where they converge, and what do you learn from where they don't?

By the way, today's episode is powered by Claude Sonnet four point six — which is, not incidentally, one of the five models that sat on this council. So there's a certain recursive quality to today's show that I find delightful.

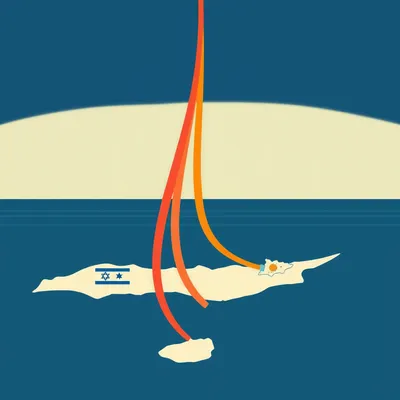

The friendly AI down the road wrote the script and also filed a forecast. The scenario Daniel chose was the Iran-Israel-US conflict, three time horizons: twenty-four hours, one week, one month. That's the test bed. What we're actually here to talk about is the methodology, what the council produced, and what the divergences reveal about how different models see the world differently — sometimes very differently.

Which is the part that surprised me most when I went through the outputs. You'd expect some variance on confidence scores, maybe some stylistic differences in how models frame uncertainty. What you don't necessarily expect is one model calling the Lebanon ceasefire effectively collapsed while another reads it as holding in name — and both of them producing internally coherent reasoning to support their position. That's not noise. That's signal.

That's exactly the design question worth unpacking. Because if you want divergence to be signal rather than groupthink, you have to build the system so that groupthink can't happen in the first place.

Right, and that's where the blind-parallel structure becomes really interesting from a methodology standpoint. So, Corn, how was this thing actually built?

The Geopol Forecast Council is essentially the leaner sibling of Daniel's Geopol-Forecaster, which simulates around forty actors across multiple timesteps and gets expensive quickly. This is the cheaper cousin — three pipeline stages, five council members, three time horizons. Designed to be fast and repeatable on a fast-moving situation.

The name "council" is doing real work there. It's not one model asked to forecast — it's a panel, deliberately constituted from different training lineages. Zhipu's GLM five point one, DeepSeek V three point two, Google's Gemini three Flash Preview, Claude Sonnet four point six, and Moonshot's Kimi K two point five. You're spanning Chinese labs, American labs, different data diets, different architectural choices. That's not accidental.

The scenario — Iran, Israel, the US, across twenty-four hours, one week, one month — was chosen precisely because it's the kind of situation where the ground shifts fast enough that you can actually stress-test a forecasting system. If everything is stable, convergence tells you nothing. You want a scenario where there are genuine open questions.

Which this absolutely was. The April twenty-second deadline alone creates a real binary inflection point cascading across three separate tracks simultaneously — Lebanon, the diplomatic channel, maritime. It's not a neat problem. It's exactly the kind of multi-actor, multi-domain tangle where you'd expect different models to weight things differently.

The scenario isn't really the point. It's the test bed. What Daniel is actually probing is whether a blind-parallel structure with genuine lineage diversity produces forecasts that are more epistemically honest than a single model asked the same question — and whether the disagreements, when they show up, are meaningful rather than arbitrary.

That framing matters. Because the alternative design, sequential deliberation where models see each other's outputs, would just produce laundered consensus. The whole value of the blind structure is that you can't accidentally agree. So, to make this concrete, let's break down the three-stage pipeline.

Yeah, let's dig into that pipeline. Because "three stages" is doing a lot of heavy lifting. Walk me through how it actually works.

Stage one is a grounding stack — RSS feeds from Times of Israel, Al Jazeera, BBC World, a Perplexity Sonar briefing, and Tavily news search, all running in parallel. That's your raw material. Stage two is where it gets interesting: the Council Head — which in this run was Claude Sonnet four point six — takes all of that and produces a single reconciled, timestamped SITREP. Key actors, stated positions, the load-bearing uncertainties. Every council member reasons from that identical frame.

That's a meaningful design choice. You're not letting each model do its own grounding independently.

Which could go either way. On one hand, you eliminate the possibility that one model grabbed a different headline and is now reasoning from a slightly different factual baseline. On the other hand, the SITREP itself reflects the Council Head's interpretive choices — what it decided was load-bearing, what it left out. There's a thumb on the scale before the council even convenes.

A very well-intentioned thumb, but still a thumb.
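To make the first two stages concrete, here is a minimal sketch of a parallel grounding stack feeding one shared SITREP. It is an illustration under stated assumptions, not Daniel's implementation: the `fetch` stub, the source identifiers, and the shape of the SITREP dictionary are all hypothetical, and a real Council Head would be a model call rather than a plain function.

```python
import asyncio

# Source names follow the episode; the identifiers themselves are hypothetical.
SOURCES = ["times_of_israel_rss", "al_jazeera_rss", "bbc_world_rss",
           "perplexity_sonar", "tavily_news"]

async def fetch(source: str) -> dict:
    """Stand-in for a real feed or API call; returns a canned payload."""
    await asyncio.sleep(0)  # yield control, as a real network call would
    return {"source": source, "items": [f"headline from {source}"]}

async def gather_grounding(sources: list[str]) -> list[dict]:
    # Every source is requested concurrently, so no source's content can
    # shape how a later source is queried or read.
    return await asyncio.gather(*(fetch(s) for s in sources))

def build_sitrep(grounding: list[dict], timestamp: str) -> dict:
    """Stand-in for the Council Head: one reconciled, timestamped frame."""
    return {
        "timestamp": timestamp,
        "sources": sorted(g["source"] for g in grounding),
        "items": [item for g in grounding for item in g["items"]],
    }

grounding = asyncio.run(gather_grounding(SOURCES))
sitrep = build_sitrep(grounding, "April 18, 23:54 UTC")
```

The point of the sketch is the shape: many inputs, one frame. Whatever interpretive tilt `build_sitrep` introduces is inherited by every council member that reads it.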

And then stage three — the council members each get that SITREP and produce exactly three concrete predictions per time horizon. Twenty-four hours, one week, one month. Nine predictions per model. Forty-five total across the panel. And here's the structural detail I find elegant: each prediction has six required fields. The prediction itself, supporting reasoning, a supporting historical precedent, a countervailing historical precedent, a single observable change factor, and a confidence score.

The countervailing precedent is the one that catches my attention. You're forcing each model to argue against itself.

Before it can file the prediction. You can't just assert "Iran will do X because historically Iran does X." You also have to surface the case where Iran didn't, or where a similar dynamic resolved differently. That's a real epistemic constraint. It's the kind of thing a good analyst does naturally and a bad one skips entirely.
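The six required fields can be sketched as a small data structure with a validation step. The field names, horizon labels, and the `validate` helpers below are assumptions made for illustration; the episode only specifies that there are six fields, three predictions per horizon, and nine per model. The `model` and `horizon` attributes are bookkeeping added here, not part of the six.

```python
from collections import Counter
from dataclasses import dataclass, fields

HORIZONS = ("24h", "1w", "1m")   # hypothetical labels for the three horizons
PER_HORIZON = 3                   # three predictions per horizon, per the episode

@dataclass(frozen=True)
class Prediction:
    model: str                     # bookkeeping, not one of the six fields
    horizon: str                   # bookkeeping, not one of the six fields
    prediction: str
    reasoning: str
    supporting_precedent: str
    countervailing_precedent: str
    change_factor: str
    confidence: float

def validate(p: Prediction) -> list[str]:
    """Return a list of problems; an empty list means the prediction can be filed."""
    errors = [f"{f.name} is empty" for f in fields(p)
              if isinstance(getattr(p, f.name), str) and not getattr(p, f.name).strip()]
    if p.horizon not in HORIZONS:
        errors.append(f"unknown horizon {p.horizon!r}")
    if not 0.0 <= p.confidence <= 1.0:
        errors.append("confidence must be between 0 and 1")
    return errors

def validate_member(preds: list[Prediction]) -> list[str]:
    """Check every prediction, then check the three-per-horizon quota."""
    errors = [e for p in preds for e in validate(p)]
    counts = Counter(p.horizon for p in preds)
    errors += [f"{h}: expected {PER_HORIZON} predictions, got {counts[h]}"
               for h in HORIZONS if counts[h] != PER_HORIZON]
    return errors

def example(horizon: str, counter: str = "a case that resolved differently") -> Prediction:
    """Build a placeholder prediction; all text values are dummies."""
    return Prediction("glm", horizon, "placeholder prediction", "placeholder reasoning",
                      "placeholder precedent", counter, "placeholder change factor", 0.4)

full_slate = [example(h) for h in HORIZONS for _ in range(PER_HORIZON)]
missing_counter = example("24h", counter="")
```

Here `validate(missing_counter)` reports the empty countervailing precedent, which is the constraint being discussed: a prediction without the case against it never gets filed.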

GLM five point one — how did that play out in practice on the twenty-four hour horizon?

GLM filed a relatively low confidence score on IRGC vessel harassment — around zero point four zero. The reasoning was grounded, the historical precedent was solid, but the confidence was modest. And that's actually where the divergence with Claude becomes stark, because Claude came in at zero point seven eight on the same prediction. Same SITREP, same structured schema, same question. A thirty-eight point gap on how hot maritime escalation gets in the next day.

That's not a rounding difference. That's a genuine disagreement about what the situation implies.

The schema forced both of them to show their working. GLM's countervailing precedent emphasized prior instances where IRGC harassment plateaued rather than escalated. Claude's supporting precedent leaned on more recent harassment patterns. Neither is wrong to invoke the precedent they chose. They're weighting different historical analogies. That's lineage showing up as a real variable.

Which is exactly what the blind structure is designed to surface. If GLM had seen Claude's output first, does it anchor toward seventy-eight? You'd never know the real distribution.
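One way to picture the blind-parallel constraint: each member is a function of the SITREP and nothing else, and results are only collected once every member has been dispatched. This is a sketch with stub members, not the project's code. The `make_member` helper and the question key are hypothetical; the two confidence values are the ones quoted in the episode.

```python
from concurrent.futures import ThreadPoolExecutor

def make_member(name: str, confidence: float):
    """Build a stub council member that returns a fixed confidence."""
    def member(sitrep: str) -> dict:
        # The only input is the shared SITREP; no other member's output
        # is in scope, so anchoring on a peer is impossible by construction.
        return {"model": name, "question": "irgc_vessel_harassment_24h",
                "confidence": confidence}
    return member

MEMBERS = [make_member("glm", 0.40), make_member("claude", 0.78)]

def run_blind(members, sitrep: str) -> list[dict]:
    with ThreadPoolExecutor(max_workers=len(members)) as pool:
        futures = [pool.submit(m, sitrep) for m in members]
        return [f.result() for f in futures]  # same order as `members`

results = run_blind(MEMBERS, "shared SITREP text")
gap_points = round(abs(results[1]["confidence"] - results[0]["confidence"]) * 100)
```

With the episode's numbers, `gap_points` comes out at 38, the gap described above. A sequential design would instead pass earlier results into later calls, which is exactly what this structure rules out.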

The Report Author — also Claude Sonnet four point six in this run — then takes all forty-five predictions, clusters them, assigns consensus strength, and flags the sharp disagreements. That's the synthesis layer. Not averaging the confidence scores, but actually characterizing the epistemic landscape. Where did the panel agree? Where did it fracture? Those are different things and they warrant different treatment in the final report.
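A rough sketch of what "characterizing rather than averaging" could look like. In the real pipeline the clustering is done by a model; here a hypothetical `topic` key stands in for that step, and the `SHARP_GAP` threshold is an arbitrary illustrative value. The confidence figures are the ones quoted in the episode.

```python
from collections import defaultdict

PANEL_SIZE = 5
SHARP_GAP = 0.25  # hypothetical threshold for flagging a sharp disagreement

def summarize(predictions: list[dict]) -> dict:
    """Group predictions by topic and describe each cluster without averaging."""
    clusters = defaultdict(list)
    for p in predictions:
        clusters[p["topic"]].append(p)
    report = {}
    for topic, members in clusters.items():
        confs = [m["confidence"] for m in members]
        models = {m["model"] for m in members}
        report[topic] = {
            # share of the panel that raised this topic at all
            "consensus_strength": len(models) / PANEL_SIZE,
            # keep the spread visible instead of collapsing it to a mean
            "confidence_range": (min(confs), max(confs)),
            "sharp_disagreement": max(confs) - min(confs) >= SHARP_GAP,
        }
    return report

predictions = [
    {"model": "deepseek", "topic": "apr22_ceasefire_collapse", "confidence": 0.55},
    {"model": "claude", "topic": "apr22_ceasefire_collapse", "confidence": 0.48},
    {"model": "kimi", "topic": "apr22_ceasefire_collapse", "confidence": 0.50},
    {"model": "glm", "topic": "irgc_harassment_24h", "confidence": 0.40},
    {"model": "claude", "topic": "irgc_harassment_24h", "confidence": 0.78},
]
report = summarize(predictions)
```

Only the figures actually quoted are included, so the consensus strengths here understate the real panel. The narrow band on the ceasefire question and the flagged gap on harassment are the two patterns the synthesis layer is meant to keep apart.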

The Report Author being the same model as the Council Head is a detail worth sitting with. There's a question of whether that introduces a kind of editorial gravity — whether Claude's own forecast subtly shapes how it characterizes the disagreements it's summarizing.

I don't think Daniel has a clean answer to that yet. It's a real tension in the design. You want the synthesis layer to be as model-agnostic as possible, but someone has to do the clustering, and whoever does it brings priors. It's not unique to AI — any human analyst writing up a panel's findings has the same problem.

Just usually slower about it.

And a human analyst also doesn't have to worry about whether their clustering algorithm is subtly biased toward their own prior outputs.

Let's get into what the council actually produced. Where did five independently reasoning models, from different lineages, look at the same SITREP and say the same thing?

There's more convergence than you might expect, actually. All five agreed that the Lebanon ceasefire is nominal — kinetic incidents near-certain within twenty-four hours. All five agreed Iran would not reopen the Strait of Hormuz in that same window, but that IRGC harassment of vessels continues. All five agreed there's no comprehensive US-Iran framework deal coming in twenty-four hours, at best procedural progress. And all five flagged April twenty-second as a genuine binary inflection point cascading across Lebanon, diplomacy, and maritime simultaneously.

On the high-confidence, near-term stuff, the panel converges. Which makes a kind of intuitive sense — the closer the horizon, the more constrained the possibilities.

The convergence holds at one month too, on certain things. All five agreed a comprehensive nuclear deal within a month is structurally unlikely — the gap on enrichment is too wide. All five saw US-Israel alignment as visibly strained and deepening as Washington pursues a deal Tel Aviv opposes. And all five predicted some form of partial maritime de-escalation within a month driven by dual-blockade economic pressure — US blocking Iranian exports, Iran blocking Hormuz, both sides eventually needing an off-ramp.

That last one is interesting because it's not obvious. It's a second-order prediction. The first-order read is "blockade equals escalation." The second-order read is "mutual economic pain drives partial de-escalation." The fact that all five landed there independently is actually more meaningful than if one model had been told to look for it.

That's the value of the blind structure showing up in the convergence, not just the divergence. When five models reason independently and arrive at the same non-obvious conclusion, that's a stronger signal than any single model producing the same prediction. You haven't just gotten one analyst's read. You've gotten five independent derivations of the same result.

Now tell me where it fell apart.

The maritime track is where the sharpest single disagreement lives. On the one-month Hormuz outlook, Claude sits at zero point six one for sustained disruption. GLM sits at zero point three five — predicting mutual de-escalation and the strait reopening. That's a direct contradiction. Not a confidence gap. A directional disagreement on how the maritime situation resolves.

Both of them went through the same countervailing-precedent exercise to get there.

Which is what makes it informative rather than just noisy. GLM's historical anchor was the pattern of IRGC brinkmanship that stops short of full closure — the 2019 tanker incidents, the repeated harassment that never quite became a sustained blockade. Claude's anchor was the current economic pressure being qualitatively different from prior episodes. Same schema, different historical weighting, opposite directional predictions.
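The difference between a confidence gap and a directional disagreement can be made explicit in a few lines. This is a sketch only: the `direction` labels and the threshold are hypothetical, and the confidence values are those quoted in the episode.

```python
def classify(a: dict, b: dict, gap_threshold: float = 0.25) -> str:
    """Label how two forecasts on the same question relate to each other."""
    if a["direction"] != b["direction"]:
        return "directional"        # the two models predict different outcomes
    if abs(a["confidence"] - b["confidence"]) >= gap_threshold:
        return "confidence_gap"     # same outcome, very different certainty
    return "aligned"

# One-month Hormuz outlook.
claude_hormuz = {"model": "claude", "direction": "sustained_disruption", "confidence": 0.61}
glm_hormuz = {"model": "glm", "direction": "reopening", "confidence": 0.35}

# Twenty-four hour IRGC vessel harassment.
claude_irgc = {"model": "claude", "direction": "harassment_continues", "confidence": 0.78}
glm_irgc = {"model": "glm", "direction": "harassment_continues", "confidence": 0.40}

hormuz_label = classify(claude_hormuz, glm_hormuz)
irgc_label = classify(claude_irgc, glm_irgc)
```

Under these assumptions the Hormuz pair is labeled `directional` and the harassment pair `confidence_gap`, which matches the distinction drawn in the conversation.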

DeepSeek was the one I found most interesting from a framing standpoint. Walk me through that.

DeepSeek's divergence is more subtle but in some ways more revealing. Where Claude and Kimi both read the Lebanon ceasefire as effectively collapsed, DeepSeek read it as "holds in name" — a framing disagreement rather than a confidence disagreement. And on the April twenty-second inflection point, DeepSeek filed zero point five five on full ceasefire collapse. Claude was at zero point four eight, Kimi at zero point five zero. Nobody is above zero point five five. That's not consensus — that's genuine uncertainty spread across a narrow band, which is actually a more honest characterization of the situation than a high-confidence call would have been.

The calibration note on this is worth flagging. The confidence scores across the whole panel were deliberately modest — mostly between zero point three and zero point seven. And the report explicitly says that reflects the fluidity of the situation, not model uncertainty per se.

Which is a meaningful distinction. A well-calibrated forecaster on an uncertain multi-actor crisis should not be filing zero point nine. If you see a model producing very high confidence scores on a situation this fluid, that's a red flag about calibration, not a sign of superior insight.

Gemini was the outlier on nuclear escalation.

Only member to surface Iran moving to sixty percent enrichment, at confidence zero point five five. Nobody else raised it. Which is interesting on its own, but what it really illustrates is the lineage diversity point in its sharpest form. Gemini noticed something the other four didn't flag. Whether that's because Gemini's training data weighted certain Iranian nuclear escalation precedents more heavily, or because its reading of the SITREP led it to a different inference chain, you can't fully separate those variables from outside the model. But the fact that it's the only one to surface that prediction tells you something about what different lineages literally see in the same document.

Then there are the single-source predictions. One model raised it, nobody else did.

Kimi flagged a possible French UNIFIL drawdown. DeepSeek flagged a French-led emergency UN Security Council meeting. Gemini flagged a Pakistan-Turkey maritime corridor. GLM flagged an Iranian domestic security crackdown. Four predictions, four different models, zero overlap. Each one saw something the others missed entirely.

That's the lineage diversity argument in its most concrete form. It's not that the models disagreed on the same prediction. It's that they didn't even look at the same parts of the problem.

That's actually the strongest case for this kind of panel design. A single model, however capable, has a particular attentional profile — what it finds salient, what historical analogies it reaches for first, what knock-on effect it's primed to notice. A lineage-diverse panel doesn't just produce more variance. It produces broader coverage of the possibility space.

The question is whether the Report Author's clustering can actually capture that. If you've got four single-source predictions from four different models and the synthesis layer treats them as low-confidence outliers to be noted and set aside, you've potentially discarded the most interesting signal in the whole dataset.

That's the design tension Daniel hasn't fully resolved yet, I think. The Report Author flags sharp disagreements — that's the explicit design goal. But there's a difference between flagging a disagreement where four models went one way and one went another, versus flagging a prediction that only one model raised at all. The latter might be the more important category. It's not disagreement. It's a blind spot in the other four.

The Report Author being Claude means it's summarizing its own blind spots alongside everyone else's. Which is a strange epistemic position to be in.

It really is. Though to be fair, any synthesis layer has that problem. You're always asking someone to characterize their own uncertainty relative to others. The honest response is probably to treat single-source predictions as a distinct category in the output: not higher or lower confidence, but flagged as "one lineage saw this, others didn't, worth watching."

Which is actually a practical design recommendation rather than just a critique.

And I think that's where the lineage diversity analysis gets useful for anyone building one of these panels. It's not just "use diverse models." It's "build your synthesis layer so that single-source predictions get their own treatment, because they represent what a particular training lineage uniquely surfaces, and that's different from disagreement." But the real challenge is translating that into practice. What does it actually look like to implement?
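As one possible reading of that recommendation, a synthesis layer could split its output by how many lineages raised each topic before it does anything else. The `topic` keys below are hypothetical labels for the predictions mentioned in the episode, and `split_by_support` is an illustrative helper, not part of the project.

```python
from collections import defaultdict

def split_by_support(predictions: list[dict]) -> tuple[dict, dict]:
    """Separate topics raised by exactly one model from topics raised by several."""
    by_topic = defaultdict(set)
    for p in predictions:
        by_topic[p["topic"]].add(p["model"])
    single_source = {t: next(iter(m)) for t, m in by_topic.items() if len(m) == 1}
    shared = {t: sorted(m) for t, m in by_topic.items() if len(m) > 1}
    return single_source, shared

PANEL = ["glm", "deepseek", "gemini", "claude", "kimi"]
predictions = (
    # A topic the whole panel raised.
    [{"model": m, "topic": "no_comprehensive_deal_24h"} for m in PANEL]
    # The four single-source predictions described in the episode.
    + [
        {"model": "kimi", "topic": "french_unifil_drawdown"},
        {"model": "deepseek", "topic": "french_led_unsc_meeting"},
        {"model": "gemini", "topic": "pakistan_turkey_maritime_corridor"},
        {"model": "glm", "topic": "iranian_domestic_crackdown"},
    ]
)
single_source, shared = split_by_support(predictions)
```

The single-source bucket is reported on its own, with the originating model attached, rather than being folded into a low-consensus tail where it would read as noise.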

So if you're building one of these panels, what does that mean concretely? How do you make sure the synthesis layer actually captures the unique contributions of each model? You don't just throw five models at a question and call it a diverse panel.

No, and I think that's the most important practical takeaway from this whole experiment. Lineage diversity is not the same as model diversity. You could pick five models that all trace back to similar training corpora, similar RLHF pipelines, similar architectural choices — and you'd get the appearance of a panel with the substance of one very confident analyst. The selection criterion has to be about training lineage, not just capability tier.

Which in practice means being deliberate about geography of origin as much as anything else. GLM and Kimi are both Chinese-lineage models, but they still surfaced different single-source predictions. DeepSeek is a different lineage again. Gemini is Google's stack. Claude is Anthropic's. The attentional profiles differ.

The six-field prediction schema is doing real work here too. If you just ask a model for its top predictions, you get whatever it finds most salient first. The schema forces it to surface a countervailing historical precedent — to argue against itself before filing the prediction. That's a meaningful constraint. It's not foolproof, but it at least requires the model to have encountered the counterargument before committing to a confidence score.

I'd also argue the confidence range is a practical output worth tracking over time. If a particular model in your panel is consistently filing scores above zero point eight on fast-moving geopolitical situations, that's a calibration problem you can identify and correct for — either by weighting that model's output differently or by building a recalibration step into the pipeline.

That's actually something the existing forecasting literature has a lot to say about. Philip Tetlock's superforecaster work found that calibration — knowing how confident to be — was as predictive of accuracy as raw analytical skill. The models that consistently overestimate their own certainty are going to drag the synthesis layer in the wrong direction even when their directional calls are good.
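Two simple calibration checks illustrate the idea. The first measures how often a model files scores above a threshold; the second is the Brier score, a standard scoring rule for probability forecasts that only becomes available once outcomes are known. Whether the council tracks either is not stated in the episode, and the sample numbers below are invented purely for illustration.

```python
def overconfidence_rate(confidences: list[float], threshold: float = 0.8) -> float:
    """Share of a model's predictions filed above the threshold."""
    return sum(c > threshold for c in confidences) / len(confidences)

def brier_score(forecasts: list[tuple[float, int]]) -> float:
    """Mean squared error between confidence and outcome (1 = happened, 0 = did not).

    Lower is better; always answering 0.5 scores 0.25.
    """
    return sum((p - outcome) ** 2 for p, outcome in forecasts) / len(forecasts)

# Invented example data, not results from the council run.
hypothetical_scores = [0.9, 0.85, 0.5, 0.4]
rate = overconfidence_rate(hypothetical_scores)

hedged = brier_score([(0.5, 1), (0.5, 0)])
perfect = brier_score([(1.0, 1), (0.0, 0)])
```

A model with a high `overconfidence_rate` on fluid situations is a candidate for down-weighting now; the Brier score is the check you run later, once the twenty-four hour and one-week predictions have resolved.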

For anyone who wants to run something like this: pick models from different training lineages, use a structured prediction schema that includes forced self-contradiction, treat single-source predictions as their own output category rather than noise, and watch the confidence distributions over time as a calibration signal.

Keep the horizons short if you want the council to be useful. The design note on this is explicit — the council is cheaper than the full actor-simulation Geopol-Forecaster, but it's also clearly weaker on long-horizon or counterfactual reasoning. Twenty-four hours, one week, one month — that's the sweet spot. Push it to six months and you're asking the panel to reason about a world that may have structurally changed in ways none of the models can anticipate from the current SITREP.

Which is an honest limitation. Short-horizon forecasting on fast-moving situations is a real and useful thing. It doesn't have to be everything.

The other thing I'd flag for anyone building on this — the Council Head SITREP is a genuine leverage point. Getting the world-state anchoring right before the panel reasons is probably worth more effort than most pipeline designers will give it. Garbage in, garbage out applies here, but it's more subtle. It's not garbage — it's a slightly tilted frame. And a slightly tilted frame, applied to five independently reasoning models simultaneously, produces a systematically tilted output that looks like consensus.

The grounding stack — the RSS feeds, the Perplexity briefing, the Tavily search — that's not just housekeeping. That's load-bearing.

It really is. And running those in parallel rather than sequentially matters too. If you run them sequentially and let each source update your world model before the next one comes in, you've already introduced an ordering effect before the Council Head even starts writing. That raises another question: how much of the divergence we see is stable versus just noise?

That's the broader question I don't think the experiment answers, and I'm not sure it's supposed to. If you run this council on the same scenario at different timestamps, how much of the divergence is stable and how much is noise? Do GLM and Claude reliably disagree on maritime escalation, or did that zero point four zero versus zero point seven eight gap reflect something specific about what was in the news cycle at twenty-three fifty-four UTC on April eighteenth?

That's probably the most important open question for anyone trying to use this operationally. A forecasting panel you can only trust once is a research instrument. A panel whose divergence patterns are stable across runs — that's a tool. And right now we don't know which one this is.

Which is an interesting research agenda. Run the same scenario at six-hour intervals. See whether Gemini reliably surfaces nuclear escalation pathways that the others don't. See whether GLM reliably anchors toward de-escalation. If the attentional profiles are stable properties of the training lineage rather than artifacts of the specific grounding, that's a very different kind of signal.
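A first pass at that stability question could be as simple as tracking one pairwise gap across repeated runs. In the sketch below only the first run uses numbers from the episode; the second and third runs are invented placeholders, and `gap_stability` is a hypothetical helper.

```python
from statistics import mean, pstdev

def gap_stability(runs: list[dict], model_a: str, model_b: str) -> dict:
    """Summarize how the confidence gap between two models behaves across runs."""
    gaps = [run[model_a] - run[model_b] for run in runs]
    return {
        "mean_gap": mean(gaps),
        "spread": pstdev(gaps),  # population standard deviation of the gap
        # True when one model is always the more confident of the two
        "same_sign": all(g > 0 for g in gaps) or all(g < 0 for g in gaps),
    }

runs = [
    {"claude": 0.78, "glm": 0.40},  # the run discussed in the episode
    {"claude": 0.70, "glm": 0.45},  # hypothetical later run
    {"claude": 0.74, "glm": 0.38},  # hypothetical later run
]
stability = gap_stability(runs, "claude", "glm")
```

A gap that keeps its sign with a small spread would point toward a stable lineage property. A gap that flips from run to run would suggest the original divergence was mostly an artifact of that night's grounding.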

It would let you build a meta-layer on top of the council — not just what the panel predicts, but what each lineage tends to see and not see, so you can weight the synthesis accordingly. That's where I think this methodology goes if it matures.

The geopolitical analysis use case is obvious, but I keep thinking about how this transfers. Any domain where you have a fast-moving situation, multiple actors with opaque intentions, and high stakes on getting the short-horizon call right. This is not a narrow tool.

It really isn't. And the fact that Daniel built it as a leaner version of the full actor-simulation pipeline matters — it suggests there's a cost-accessible version of this kind of structured AI forecasting that doesn't require spinning up forty simulated actors per run. That's a meaningful design contribution on its own.

Big thanks to Hilbert Flumingtop for producing this one. And to Modal for keeping our GPU pipeline running — if you're building anything that needs serverless compute, they're worth a look.

This has been My Weird Prompts. If you've got a minute to leave us a review, we read every one — find us on Spotify or at myweirdprompts.

We'll see you on the next one.