Imagine you are trying to build a serious AI agentic workflow in early twenty twenty-six. You start with one Model Context Protocol server for Google Calendar, then one for Slack, then maybe a custom one for your internal database. Each one requires a separate configuration entry in Claude Desktop or your IDE, each one needs its own environment variables, and every time you add a new one, you are basically playing Russian roulette with your local runtime stability. It is a management nightmare that simply does not scale.

It really is the wild west of configuration files right now, Corn. And today's prompt from Daniel hits on exactly why this is the current bottleneck for enterprise AI adoption. He is asking us to look at cloud-native MCP aggregators, specifically platforms like Composio, and how they provide a centralized management layer that moves all that "plumbing" off your local machine and into a governed cloud environment. By the way, a quick shout-out to the tech powering our discussion today—Google Gemini Three Flash is the model writing our script for this episode.

It is funny because we have spent so much time talking about the protocol itself, but we are finally hitting the "day two" problems. It is one thing to get a weather tool working in a sandbox; it is another thing entirely to manage fifty different integrations across a team of a hundred developers. Think about the friction there. If you’re a lead dev, are you really going to walk every junior engineer through setting up their local environment variables for the Jira MCP server? Herman Poppleberry, you have been digging into the Composio documentation and their new connectors workflow. Is this just a fancy proxy, or is there something more fundamental happening here?

It is much more than a proxy. If you look at what Composio launched in January, they have essentially built a unified control plane for MCP. In a traditional setup, if you want to use ten different tools, you are managing ten different server processes. That means ten different ports being occupied on your machine, ten different potential points of memory leakage, and ten different logs to check when something fails. With a cloud-native aggregator like Composio, you have one single connection point—a connector URL—that acts as a gateway to an entire library of managed tools. You link your accounts through their "connect" dashboard once, and then your AI host, whether that is Claude or a custom agent, just talks to that one aggregator.
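To make that contrast concrete, here is a minimal sketch of the two configuration shapes as Python dictionaries. The `mcpServers` layout with `command`, `args`, and `env` mirrors the local-server entries in Claude Desktop's config file. The server names, package names, environment variable names, the `url` key, and the connector URL are all hypothetical placeholders rather than real Composio values, and how a given host accepts a remote URL varies.

```python
import json

# Hypothetical local setup: one entry (and one server process) per tool.
local_config = {
    "mcpServers": {
        name: {
            "command": "npx",
            "args": ["-y", f"example-{name}-mcp-server"],  # placeholder package name
            "env": {f"{name.upper()}_API_KEY": "<secret stored in plain text>"},
        }
        for name in ["calendar", "slack", "jira", "notion", "github"]
    }
}

# Hypothetical aggregated setup: a single remote entry, no local secrets.
aggregated_config = {
    "mcpServers": {
        "aggregator": {"url": "https://aggregator.example.invalid/mcp/<connector-id>"}
    }
}


def count_secrets(config: dict) -> int:
    """Count credentials stored directly in the config file."""
    return sum(len(server.get("env", {})) for server in config["mcpServers"].values())


print(json.dumps(aggregated_config, indent=2))
print(count_secrets(local_config), "local secrets vs", count_secrets(aggregated_config))
```

The point of the sketch is only the shape: the number of entries and plain-text secrets grows with every local tool, while the aggregated form stays at one entry.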

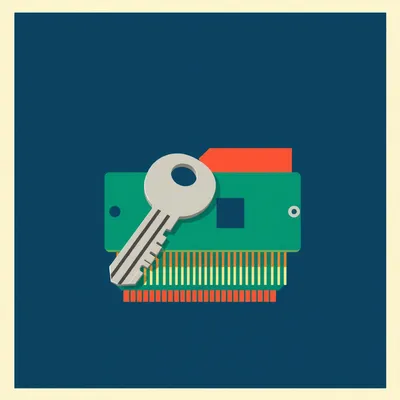

So, instead of me having to vet the security of ten different open-source MCP servers I found on GitHub, I am essentially outsourcing that vetting to the platform? That sounds like a massive relief for a DevOps team, but I can already hear the security purists screaming about "centralized points of failure" and "giving away the keys to the kingdom." If I give Composio access to my GitHub and my Slack, isn't that a massive target on their back?

That is the tension, right? But let's look at the actual architecture. When you use something like the Composio Connectors workflow, you are not just handing over a master key. You are using OAuth token vaulting. They handle the messy handshake with services like Notion, Figma, or GitHub, and then they expose those tools via a standardized MCP interface. The security upside that Daniel mentioned in his prompt—and this is the part people miss—is the auditability. If I have twenty developers with local MCP servers, I have zero visibility into what API calls are being made or what data is being exfiltrated. If they are all routing through a centralized aggregator, I have a single, unified audit log.
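A unified audit log is easy to picture in code. The sketch below is an assumption about the general pattern, not Composio's implementation: every tool call passes through one wrapper that records who called what, with which arguments, and whether it succeeded. The `audited` helper, the agent ID, and the `search_notion` stand-in are hypothetical names.

```python
import time
from typing import Any, Callable

# One shared log for every agent and every tool (in memory for the sketch).
audit_log: list[dict[str, Any]] = []


def audited(agent_id: str, tool_name: str, tool: Callable[..., Any], **kwargs: Any) -> Any:
    """Run a tool call and append exactly one record, on success or failure."""
    record: dict[str, Any] = {
        "ts": time.time(),
        "agent": agent_id,
        "tool": tool_name,
        "args": kwargs,
    }
    try:
        result = tool(**kwargs)
        record["status"] = "ok"
        return result
    except Exception as exc:
        record["status"] = f"error: {exc}"
        raise
    finally:
        audit_log.append(record)


def search_notion(query: str) -> list[str]:
    """Stand-in for a real connector call."""
    return [f"page about {query}"]


pages = audited("dev-1", "notion_search", search_notion, query="merger")
print(pages, audit_log[-1]["status"])
```

With twenty local servers there are twenty places this wrapper would have to live; with an aggregator there is one.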

I see. It is the difference between a hundred people having their own private backdoors to the office and everyone having to badge in through the front desk. The front desk is a single point of failure, sure, but at least you know who is coming and going. And let's be honest, half of those "private backdoors" are probably propped open with a brick. Most local MCP setups I see are running with over-privileged API keys stored in plain text in a JSON file.

You've hit on the exact technical vulnerability that's rampant right now. Those local config.json files are a security researcher's dream. If someone gets access to your machine, they have the keys to every service your AI agent touches. Composio moves those credentials into a dedicated vault. They also allow for scoped permissions. You can define a policy that says "this specific AI agent can read from Slack but can't post to it," and that policy is enforced at the aggregator level before the request ever hits the Slack API. It brings a "Zero Trust" posture to the tool-calling layer that just doesn't exist in a local setup.
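The "read from Slack but can't post" rule can be sketched in a few lines. This is an illustration of policy enforcement at a middle layer under assumed names (`Policy`, `forward`, `PermissionDenied`), not the platform's actual policy format. The key property is that the check runs before any upstream call would be made.

```python
from dataclasses import dataclass, field


class PermissionDenied(Exception):
    """Raised when a tool call falls outside the agent's scope."""


@dataclass
class Policy:
    # Maps a service name to the set of actions the agent may perform on it.
    allowed: dict[str, set[str]] = field(default_factory=dict)

    def permits(self, service: str, action: str) -> bool:
        return action in self.allowed.get(service, set())


def forward(policy: Policy, service: str, action: str) -> str:
    """Check the policy first; only then would the upstream API be called."""
    if not policy.permits(service, action):
        raise PermissionDenied(f"{service}.{action} is not allowed for this agent")
    return f"forwarded {service}.{action}"  # stand-in for the real API call


read_only_slack = Policy(allowed={"slack": {"read"}})
print(forward(read_only_slack, "slack", "read"))
try:
    forward(read_only_slack, "slack", "post")
except PermissionDenied as exc:
    print("blocked:", exc)
```

The same shape covers a blanket "no delete actions" rule: leave `delete` out of every allowed set and the request never leaves the aggregator.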

Let's talk about the "vetting" aspect Daniel mentioned. This is a huge deal for enterprises. If I'm a CTO at a fintech company, I am not going to let my developers install some random Python script from a three-star GitHub repo that claims to be an MCP server for Jira. I don't know what that code is doing. It could be sending a copy of every Jira ticket to a server in a non-compliant jurisdiction. But if I use a platform like Composio, they have already built and verified those connectors.

It shifts the burden of trust. Instead of trusting a hundred different independent developers, you are trusting one platform provider. For a Fortune five hundred company, that is a much easier legal and compliance conversation. In fact, we are seeing some large firms use these aggregators specifically to meet SOC Two compliance. They can prove to auditors that every single AI-driven action is logged, throttled, and authenticated via a central authority. It’s about creating a perimeter around the agent’s actions.

I want to go deeper on the setup process because that is usually where these things fall apart. If it's too hard to use, developers will just bypass it. You mentioned the "connect dot composio dot dev" workflow. How does that actually look for a user compared to the old-school manual setup? Is it really as simple as a "magic link"?

It is night and day. In the old world (meaning, like, six months ago) you were running npx commands, installing local dependencies such as Python or Node runtimes whose versions might conflict, and manually editing hidden config files in your Library or AppData folders. With the new workflow, you go to their dashboard, click "Link Notion," sign in via OAuth, and then they give you a single URL. You take that URL, drop it into your MCP host, like the Claude Desktop config, and every tool you've enabled in the dashboard just appears. If you want to add Figma five minutes later, you don't touch your config file. You just click "enable" in the cloud dashboard, and the agent sees the new tool immediately.

It is basically an App Store for AI tools. But there is a catch, right? There is always a catch. If I am a startup or a solo dev, am I paying a "convenience tax" that makes this unsustainable? Does the latency of going from my local machine to the cloud aggregator and then to the service—like Notion—actually slow down the agent's response time?

There is a cost shift, for sure. You are moving from "free" open-source tools that cost you engineering time to maintain, to a subscription model. But for a team, the engineering time spent debugging a broken local MCP environment is far more expensive than a platform fee. Regarding latency, it can actually be faster. These aggregators are running on high-bandwidth backbone connections. When your local machine has to spin up a Python environment just to call a weather API, that startup overhead can be higher than the extra hop of an optimized cloud-to-cloud request. The real trade-off, for me, is the dependency on their uptime. If Composio goes down, your agent loses its hands and feet.

True, but if you're building on top of Claude or OpenAI, you're already dependent on a cloud provider. Adding one more layer to the stack isn't a deal-breaker if the value add is high enough. Now, Herman, let's talk about how this fits into the broader stack. We did an episode a while back on AI Gateways—things like Portkey or Helicone—which act as the "Nginx" for your AI models. They handle the routing between Claude, GPT-four, and Llama. How does an MCP aggregator like Composio coexist with an AI Gateway? Are they competing for the same spot?

This is where the architecture gets really interesting, and it's a distinction that a lot of people are still trying to wrap their heads around. They aren't competing; they are complementary. Think of the AI Gateway as being "north" of the model. It handles the request coming from the user or the app to the LLM. It manages things like model failover, cost tracking, and prompt caching. The MCP Aggregator, like Composio, lives "south" of the model. It handles the model's interaction with the outside world.

So the model is the brain, the AI Gateway is the nervous system bringing in sensory input from the user, and the MCP Aggregator is the set of tools and limbs the brain uses to actually do work?

That is a very apt way to visualize it. When the LLM decides it needs to fetch a document from Notion, it doesn't talk to the AI Gateway for that. It sends a tool call through the MCP interface. If you have an aggregator in place, that tool call goes to the aggregator, which then handles the auth, the API call to Notion, and returns the data to the model. In a sophisticated enterprise setup, you would have both. You’d use a gateway to manage your model costs and a cloud-native MCP aggregator to manage your tool permissions and credentials.
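Here is a rough sketch of that "southbound" hop. MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool `name` and `arguments`. Everything else in the block is a hypothetical stand-in: the in-memory `vault`, the `notion_fetch` backend, and the `<service>_<action>` naming convention used to pick a backend.

```python
from typing import Any, Callable

# Hypothetical credential store held by the aggregator, never by the model.
vault = {"notion": "oauth-token-for-notion"}


def notion_fetch(token: str, arguments: dict[str, Any]) -> str:
    """Stand-in for an authenticated call to the real service."""
    return f"contents of page {arguments['page_id']}"


backends: dict[str, Callable[[str, dict[str, Any]], str]] = {"notion": notion_fetch}


def handle_tool_call(request: dict[str, Any]) -> dict[str, Any]:
    """Resolve a tools/call request: look up auth, call the service, wrap the result."""
    params = request["params"]
    service = params["name"].split("_", 1)[0]  # assumed "<service>_<action>" naming
    text = backends[service](vault[service], params["arguments"])
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {"content": [{"type": "text", "text": text}], "isError": False},
    }


request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "notion_fetch_page", "arguments": {"page_id": "abc123"}},
}
response = handle_tool_call(request)
print(response["result"]["content"][0]["text"])
```

Notice that the request from the model carries no credential at all; the token is attached inside the aggregator.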

It feels like we are seeing the "professionalization" of the AI stack. We're moving away from these monolithic "everything-is-in-this-one-script" apps to a modular architecture where every layer has a specific job. And honestly, the "Southbound" layer—the tools layer—has been the messiest part until now. I’ve seen developers trying to write custom wrappers for every single API, and it’s a maintenance nightmare.

It has been messy because there was no standard. Before MCP, every tool had its own custom API implementation. MCP gave us the standard, but it didn't give us the management layer. That's the niche these aggregators are filling. And what's wild is how fast they are moving. Composio now supports hundreds of connectors. If you tried to build and maintain ten percent of those yourself, you'd have a full-time job just keeping the auth tokens from expiring. Think about the "token refresh" logic alone. If you have fifty integrations, you have fifty different ways tokens expire. An aggregator handles all that background noise.
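The token refresh chore looks roughly like this when it is centralized. This is a minimal sketch under stated assumptions: `TokenVault`, `Token`, the 60-second safety margin, and the `refresh_slack` callback are all hypothetical, and a real refresher would call the provider's OAuth token endpoint. The clock is injectable so the expiry path can be exercised without waiting.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class Token:
    value: str
    expires_at: float  # seconds since the epoch


class TokenVault:
    """Caches one token per service and refreshes it shortly before expiry."""

    def __init__(
        self,
        refreshers: dict[str, Callable[[], Token]],
        skew: float = 60.0,
        clock: Callable[[], float] = time.time,
    ) -> None:
        self._refreshers = refreshers
        self._skew = skew  # refresh this many seconds early
        self._clock = clock
        self._tokens: dict[str, Token] = {}

    def get(self, service: str) -> str:
        token = self._tokens.get(service)
        if token is None or token.expires_at - self._skew <= self._clock():
            token = self._refreshers[service]()
            self._tokens[service] = token
        return token.value


# Fake clock and refresher so the example is deterministic.
now = [1000.0]
refresh_calls: list[str] = []


def refresh_slack() -> Token:
    refresh_calls.append("slack")
    return Token(value=f"slack-token-{len(refresh_calls)}", expires_at=now[0] + 3600)


token_vault = TokenVault({"slack": refresh_slack}, clock=lambda: now[0])
first = token_vault.get("slack")   # no token yet, so this refreshes
second = token_vault.get("slack")  # still valid, served from cache
now[0] += 3600                     # an hour passes and the token expires
third = token_vault.get("slack")   # refreshed again
print(first, second, third)
```

Multiply the refresher by fifty services, each with its own expiry rules, and the case for doing this once in one place is fairly clear.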

I'm thinking about the second-order effects here. If everyone starts using cloud aggregators, does that change the way MCP servers are developed? Does it disincentivize people from making local, "edge" MCP servers? Or does it make the protocol more robust because there’s a clear commercial path for developers?

I don't think it kills local development. I think it actually creates a two-tier system. You'll have local MCP for highly sensitive, air-gapped, or very low-latency tasks—like an AI agent controlling a local robot arm or a local database. If you're querying a 50GB SQLite file on your hard drive, you don't want to upload that to the cloud. But for anything that lives in the cloud anyway—your CRM, your email, your project management tools—it makes zero sense to route that through a local server process on your laptop. Why download data from the cloud to your local machine just to send it back up to a cloud LLM? It's inefficient.

The "cloud-to-cloud" path is much cleaner. It reduces what I call the "local bottleneck." It also cuts the "restart tax" we’ve talked about. Every time I change a local MCP config, I have to restart my host application. It breaks the conversation flow. With a cloud aggregator, the connection between the host and the aggregator remains constant. I can add or remove tools in the Composio dashboard and the changes propagate to the agent without me ever having to hit "restart" on my IDE.
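One way to picture tools changing without a restart: the connection stays open, and the server side tells the client when its tool list has changed. The MCP specification defines a `notifications/tools/list_changed` notification for this purpose; whether a particular host reacts to it live is an assumption here. The `ToolRegistry` class and the tool names below are hypothetical.

```python
from typing import Callable


class ToolRegistry:
    """Hypothetical server-side list of enabled tools that can change at runtime."""

    def __init__(self) -> None:
        self._tools: dict[str, str] = {}
        self._listeners: list[Callable[[], None]] = []

    def on_change(self, listener: Callable[[], None]) -> None:
        """Register a callback, standing in for a list-changed notification."""
        self._listeners.append(listener)

    def enable(self, name: str, description: str) -> None:
        self._tools[name] = description
        self._notify()

    def disable(self, name: str) -> None:
        # Only notify if the tool was actually enabled.
        if self._tools.pop(name, None) is not None:
            self._notify()

    def list_tools(self) -> list[str]:
        return sorted(self._tools)

    def _notify(self) -> None:
        for listener in self._listeners:
            listener()


registry = ToolRegistry()
seen_by_agent: list[list[str]] = []
# The "agent" re-reads the tool list whenever it is told something changed.
registry.on_change(lambda: seen_by_agent.append(registry.list_tools()))

registry.enable("notion_search", "Search Notion pages")
registry.enable("figma_get_file", "Fetch a Figma file")
registry.disable("notion_search")
print(seen_by_agent[-1])
```

Nothing on the client side was edited or restarted across those three changes; it just re-read the list each time.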

That "restart tax" is a massive productivity killer for developers. If you're in the flow, trying to get an agent to write code for you, and you realize you need it to access a new documentation site, you don't want to stop everything to fiddle with a config file. You just want to flip a switch and keep going. Composio basically gives you a hot-swappable tool belt. It’s like being a carpenter who can snap a new drill bit onto their belt without having to walk back to the truck.

Let's talk about the competition for a second. Daniel mentioned MetaMCP, which is an open-source project by the Wayfound team that does local aggregation. It's great for developers who want to stay purely local and keep their data on their own machine. But are there other cloud competitors? Is there a "standard" emerging for cloud aggregation, or is everyone doing their own thing?

There are a few others popping up, like some of the features we're seeing in platforms like LangChain or even some of the newer AI-native IDEs trying to build their own internal aggregators. But Composio is currently leading the pack in terms of the sheer number of pre-built connectors and the maturity of their enterprise features. They've really leaned into the "developer experience" side of it. They provide SDKs for Python and JavaScript that make it trivial to bake their aggregation layer into a custom-built agent.

So if I'm building a custom agent for my company, I don't have to write the tool-calling logic for fifty different APIs. I just import the Composio SDK, give it my API key, and suddenly my agent has a "super-app" capability. Does this work with any model? Could I use a local Llama model but still use Composio for the tools?

As long as your model or your orchestration framework supports MCP, it doesn't care where the server is hosted. You could be running a tiny model on an edge device in a factory, and if that device has an internet connection, it can use the Composio cloud to talk to the company's Salesforce instance. And you get the logging for free. You can see exactly what your agent is doing in the Composio dashboard. If it's hallucinating and trying to delete all your Notion pages, you can see that attempt and stop it, or better yet, you can have a policy in place that prevents "delete" actions entirely.

This brings me to a thought experiment. If we have these centralized tool hubs, do we even need the LLM to know how to use the tools anymore? Or does the aggregator become smart enough to "route" the intent? For example, if I say "find that email about the merger," does the LLM call a "search" tool, or does it call the aggregator, and the aggregator decides whether to search Gmail, Outlook, or Slack?

That is where the "Tool Router" concept comes in. We are starting to see aggregators act as an intelligent middleman. Instead of the LLM having to manage fifty different tool definitions in its context window (which, let's be honest, degrades performance and gets expensive), the LLM only sees a few "meta-tools." It says, "I need to find information about X," and the aggregator handles the fan-out search across multiple services. This keeps the LLM's context window lean and focused. It eases the tool-overload problem, a cousin of "lost in the middle," where models struggle to pick the right option from a long list.
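The fan-out idea can be sketched as a single meta-tool sitting in front of several per-service searches. This is an illustration of the pattern only; the `find_information` name, the backends, and the in-memory data are hypothetical, and a real router would likely search in parallel and rank the results.

```python
from typing import Callable

SearchFn = Callable[[str], list[str]]


def make_backend(items: list[str]) -> SearchFn:
    """Build a toy case-insensitive substring search over in-memory items."""
    return lambda query: [item for item in items if query.lower() in item.lower()]


# Hypothetical linked services, each with its own search implementation.
search_backends: dict[str, SearchFn] = {
    "gmail": make_backend(["Re: Merger timeline", "Lunch on Friday"]),
    "slack": make_backend(["#deals: merger docs uploaded"]),
    "outlook": make_backend(["Quarterly report"]),
}


def find_information(query: str) -> dict[str, list[str]]:
    """The single meta-tool the model sees; it fans out to every linked service."""
    results: dict[str, list[str]] = {}
    for service, search in search_backends.items():
        hits = search(query)
        if hits:  # omit services with no matches to keep the reply small
            results[service] = hits
    return results


print(find_information("merger"))
```

The model's context holds one tool definition instead of three, and the decision about where to look moves into the infrastructure.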

That is a huge architectural win. We've all seen what happens when you give an LLM too many tools—it starts getting confused, it misses the right one, or it just prioritizes the last tool in the list. By abstracting that complexity into the aggregator, you're making the agent more reliable. It’s like a manager who doesn’t need to know how to use the lathe, the drill press, and the welder; they just need to know to tell the shop foreman what needs to be built.

It's the "less is more" principle applied to AI context. The more you can offload to the infrastructure layer, the better the core model performs. It's similar to how we don't expect a web browser to know how to render every possible file format; it uses plugins or hands off to other applications. The browser just manages the window.

Okay, let's pivot to the practical takeaways for someone listening who is currently drowning in MCP configuration files. Maybe they have five different JSON files for different projects and they’re losing track of which API key is where. If they want to try this out, what is the move?

The first step is to just go to connect dot composio dot dev and look at the library. They have a free tier that's very generous for prototyping. Link one service, something simple like Google Calendar or a GitHub repo. Then, get the MCP connector URL and drop it into Claude Desktop. You will immediately see the difference in how much cleaner your local setup is. You’ll have one server entry listed in your config, but that one entry will expand into a dozen tools inside the Claude interface.

And for the enterprise folks? The people who are worried about governance and security? How do they sell this to a skeptical IT department that is already terrified of AI agents?

They should look at the audit logs and the role-based access control (RBAC) features. That is the real selling point for the "grown-ups" in the room. You can show your security team a dashboard that says, "Here is every tool our agents can access, here is the exact scope of their permissions, and here is a log of every single action they've taken." That turns the "no" from the security team into a "yes, but with these conditions." It changes the conversation from "we can't control this" to "we have a central kill switch."

It’s the "governance-friendly" aspect that Daniel highlighted. It’s hard to overstate how important that is. In twenty twenty-six, the era of "shadow AI" where developers are just using their own API keys under the table is coming to an end. Companies want to bring this into the light. They want to know exactly how much they are spending on API calls and who is making them.

And these aggregators are the flashlight. They make it visible and manageable. I think we're going to see this become the standard pattern for any agentic workflow that isn't strictly hobbyist. If you're building for production, you need a management layer. You wouldn't run a fleet of servers without a monitoring tool; why would you run a fleet of AI tools without an aggregator? It’s just basic operational maturity.

It's a compelling argument. I'm still a bit of a sloth, so the idea of not having to manually edit my claude_desktop_config.json every time a new MCP server drops is the biggest win for me. I'll take the cloud-to-cloud efficiency over local debugging any day of the week. I hate that moment where you realize a trailing comma in your JSON file has broken your entire agent.
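That trailing-comma failure is easy to reproduce. Strict JSON does not allow a comma before a closing brace, and Python's standard `json` module rejects it. The config snippets below are made-up examples, not a real Claude Desktop config.

```python
import json

# Hypothetical config fragments; the only difference is the comma before "}}".
with_trailing_comma = '{"mcpServers": {"calendar": {"command": "npx"},}}'
without_trailing_comma = '{"mcpServers": {"calendar": {"command": "npx"}}}'


def is_valid_json(text: str) -> bool:
    """Return True if the text parses as strict JSON."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False


print(is_valid_json(with_trailing_comma), is_valid_json(without_trailing_comma))
```

Running a check like this before restarting the host is a cheap guard for anyone still editing the file by hand.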

I know you will. And frankly, your laptop's fan will thank you too. Running ten different local MCP environments, each with its own runtime, is a surprisingly heavy lift for a machine that's already trying to run an IDE and a dozen Chrome tabs. Moving that compute to the cloud is just good resource management.

Hey, my laptop is a workhorse, unlike its owner. But yeah, the efficiency gain is real. So, to summarize the vision here: we've got AI Gateways managing the models "northbound," and we've got MCP Aggregators like Composio managing the tools "southbound." It's a sandwich architecture that actually makes sense for the enterprise. It provides a clear separation of concerns.

It's the "Enterprise AI Sandwich." Patent pending. But seriously, it's about stability. We are moving from the "cool demo" phase to the "reliable infrastructure" phase of the AI revolution. You can't build a billion-dollar business on top of a fragile local config file. You need a platform.

And I think that's where we leave it today. This was a great deep dive into a niche that I think is about to become a lot less niche very quickly. As agents get more complex, the plumbing matters more than the faucet.

I agree. As more people realize that the "one-by-one" approach to MCP is a dead end for scaling, these platforms are going to see massive growth. The protocol is the language, but the aggregator is the telephone exchange.

Before we wrap up, I've got to ask the lingering question. Do you think we'll ever reach a point where the LLM providers just build this themselves? Will OpenAI or Anthropic just become their own MCP aggregators and cut out the middleman?

They might try, but the power of a platform like Composio is its neutrality. If I'm using an Anthropic-built aggregator, am I going to be able to use it with my custom Llama model running on a private server? Probably not. The ecosystem needs independent, cross-platform aggregators to thrive. Developers want to avoid vendor lock-in, and a neutral tool-layer is the best way to do that.

That "neutrality" is a key point. It keeps the ecosystem open and competitive. Well, I think we have covered a lot of ground here. Thanks for doing the heavy lifting on the research, Herman. You're a credit to the Poppleberry name.

Just doing my part to keep the world informed, Corn. And thanks to Daniel for the prompt—it's always good to dig into the "plumbing" because that's where the real problems get solved and where the real value is created.

Big thanks to our producer, Hilbert Flumingtop, for keeping the gears turning behind the scenes. And a huge thank you to Modal for providing the GPU credits that power the generation of this show. If you're building AI infra, Modal is the place to do it.

This has been My Weird Prompts. If you found this deep dive useful, a quick review on Apple Podcasts or Spotify really helps us get these insights in front of more people. We appreciate the support.

You can find all our episodes and the RSS feed at myweirdprompts dot com. We'll be back soon with more of your weird prompts.

See you then.

Later.