Daniel sent us this one, and it's genuinely practical in a way I appreciate. He wants to dig into the full protocol for participating in virtual hackathons and finding your footing in AI circles. Not just "here's how to sign up" but the real texture of it: how you tell a worthwhile event from a glorified sponsor showcase, what the format actually looks like once you're inside, what you're building toward beyond a prize, and how you turn a Discord server full of strangers into something that resembles an actual professional community. There's a lot of ground to cover.

By the way, today's episode is powered by Claude Sonnet four point six, which feels appropriate given we're about to spend thirty-five minutes talking about AI communities.

The friendly AI down the road, writing our material. Very on-brand.

Right, so, virtual hackathons. I want to start with something that I think gets undersold, which is how dramatically the format has matured. Because there's this lingering perception that a virtual hackathon is a lesser thing, a consolation prize for when you can't get a room together. And that was maybe true in the early days, but the events being run now, especially in the AI space, are sophisticated. We're talking about purpose-built infrastructure for team formation, judging panels that include researchers from major labs, and async-friendly timelines that actually accommodate people with jobs and families. The in-person assumption has basically been dismantled.

Which also changes who shows up. If you need to fly somewhere, you've already filtered out a huge portion of the people who might actually be the most interesting to work with.

The geographic flattening is real. You can have someone in Nairobi paired with someone in Seoul and someone in, I don't know, Jerusalem, and that team composition produces something that a room full of people from the same city probably wouldn't. The diversity of context is a genuine asset, not just a logistical compromise.

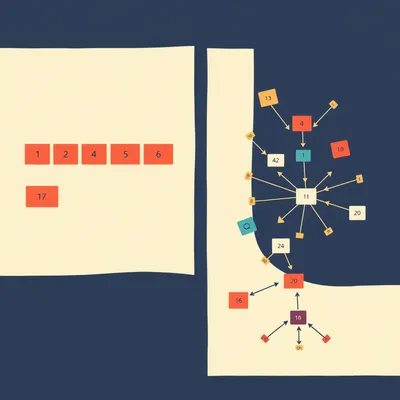

Before we get into the mechanics, let's talk about the landscape, because it's enormous. The AI hackathon space has exploded. I've seen figures suggesting something like a hundred and fifty or more AI-focused virtual events in any given quarter, which means the first real skill is just triage. How do you even know which ones are worth your time?

This is where I think a lot of people make their first mistake, which is treating hackathon announcements like job listings, where you apply to everything and hope something sticks. The signal-to-noise problem is real. A lot of events that call themselves hackathons are essentially marketing exercises. A company wants to generate buzz around a new API, they put up a ten-thousand-dollar prize pool, they call it a hackathon, and what they actually want is user-generated marketing content. That's not inherently evil, but it's a different thing than an event designed to push you technically and connect you with serious people.

How do you tell them apart? Because from the outside, the landing pages all look roughly the same.

A few reliable signals. The first is the judging criteria. If the rubric is heavily weighted toward things like "wow factor" and "presentation quality" relative to technical depth or novelty, that's a flag. Serious events will have explicit criteria around implementation quality, originality of the approach, and sometimes reproducibility. The second signal is who the judges actually are. If the panel is entirely composed of developer advocates from sponsor companies, that tells you something about what the event is optimizing for. If there are researchers, independent engineers with track records, or academics on the panel, that's a better sign.

What about the prize structure itself? Is there anything to read there?

Events with very large headline prizes and very few winners tend to attract a different crowd than events with distributed prize pools or tiered recognition. The winner-takes-all structure creates a more competitive, less collaborative atmosphere. Some of the best hackathons I've seen described have prize pools that reward multiple tracks, so there's recognition for the most technically ambitious project, the most practically useful one, the best documentation, the best solo submission. That structure signals the organizers actually care about the experience, not just the optics of a big check being handed over.

There's a community angle to this too, right? Because the Discord server that spins up around an event is essentially a preview of who's going to be in the room with you.

Most serious hackathons will have some kind of pre-event community channel, whether it's Discord or Slack or a forum. The quality of discourse in that space before the event starts is predictive. If it's mostly people posting "looking for team, I can do frontend," that's one thing. If people are already sharing papers, debating approaches, posting preliminary experiments, that's a different caliber of event. Join the Discord early, lurk for a few days, and you'll know pretty quickly whether this is a place you want to spend a weekend.

Which also means you can start building relationships before the gun goes off. That's not cheating, that's just being smart.

It's actually expected at the better events. Some explicitly structure pre-event socials or challenge reveals specifically to give people time to find collaborators before the clock starts. The AI for Good Hackathon that ran earlier this year had a whole week of pre-event sessions, including office hours with the challenge setters, so teams could actually scope their projects intelligently rather than just sprinting for forty-eight hours on something that turned out to be the wrong problem.

Tell me more about that one, because "AI for Good" is a phrase that can mean anything from impactful work to a company wanting to polish its ESG credentials.

The AI for Good Hackathon in twenty twenty-six was notable for a few reasons. The challenges were co-designed with NGOs and public health organizations, which gave them a specificity that generic "solve a social problem" prompts usually lack. Participants weren't just building something and hoping it was useful; they were building to a defined need that an actual organization had articulated. The judging included representatives from those partner organizations, which meant technical novelty wasn't sufficient. You also had to demonstrate that what you built connected to the actual operational reality of the people who'd theoretically use it. That's a harder bar, and it attracted people who wanted to clear it.

That's a meaningful distinction. The challenge setter is also the end user, which completely changes what "good" means for a submission.

The submissions that came out of it reflect that. Some of the tools built at that event got picked up for actual pilots, which is rare. Most hackathon projects die in the repository where they were born. When something makes the jump to real deployment, even in a limited pilot, that's a signal the event was structured to produce real outcomes rather than impressive demos.

Okay, so let's say you've vetted the event, you've joined the Discord, you've read the judging criteria, and you've decided this is worth your weekend. What does the inside of a virtual hackathon actually look like? Because I think there's a gap between what people imagine and what they actually encounter.

The format varies more than people expect. The classic model is a fixed duration, usually forty-eight to seventy-two hours, with a defined start time, a submission deadline, and a synchronous judging or demo period at the end. But that's not universal. A lot of AI-focused events now run on longer timelines, sometimes a week or two, specifically because the kind of work involved (training models, running evaluations, iterating on prompts) doesn't compress well into a weekend sprint. You need compute time, you need sleep, you need the ability to come back to something after you've thought about it.

The forty-eight-hour sprint model made more sense when the deliverable was a web app. You could scaffold something, throw a UI on it, demo it. That's not really how serious AI work happens.

And the better events have recognized that. Some have moved to what they call async-first formats, where the core work happens over a longer window and the synchronous elements are reserved for team standups, optional mentorship sessions, and the final presentation. That's actually a more honest representation of how distributed technical teams work, which is an underrated benefit of the format. You're not just building a project, you're practicing the collaboration patterns you'd use in a real distributed team.

How does team formation actually work in practice? Because "find your team" is easy to say and hard to do.

Most events have some kind of team formation mechanism, and they range from extremely rudimentary to surprisingly well-designed. At the basic end, you get a channel in Discord where people post their skills and what they're looking for. That works, but it's chaotic. The better events use structured intake forms where you specify your skills, your availability, your interests, and whether you're looking to join a team or form one, and then they do some kind of matching, sometimes algorithmically, sometimes manually. I've seen events that run dedicated team formation sessions, basically speed networking with a specific goal, which is awkward but effective.

The awkwardness is load-bearing. You need some friction to force actual conversation rather than just profile browsing.

The events where team formation is too frictionless tend to produce teams of people who never really talked to each other, they just clicked "join" on someone's post. Those teams struggle because there's no established communication pattern, no sense of who defers to whom on what, no shared vocabulary. The teams that form through actual conversation, even a thirty-minute video call before the event, perform noticeably better. And they're also more likely to stay in touch after.
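The algorithmic matching mentioned above isn't described in detail by any particular event, but a small sketch makes the idea concrete. The Python below assumes a hypothetical intake form that records each participant's skills and available hours, and it uses a simple greedy heuristic that is our own assumption, not any organizer's actual method: seed each team with the most available unassigned person, then keep adding whoever covers the most still-missing skills.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Participant:
    # Hypothetical intake-form fields; real events will collect different data.
    name: str
    skills: set[str]
    hours_available: int


def form_teams(participants: list[Participant], team_size: int,
               wanted_skills: set[str]) -> list[list[Participant]]:
    """Greedy skill-coverage matching (an illustrative heuristic, not optimal)."""
    # Most available people are considered first; sorted() is stable on ties.
    pool = sorted(participants, key=lambda p: p.hours_available, reverse=True)
    teams: list[list[Participant]] = []
    while pool:
        # Seed a new team with the most available unassigned participant.
        team = [pool.pop(0)]
        covered = set(team[0].skills)
        while len(team) < team_size and pool:
            # Prefer whoever adds the most wanted-but-uncovered skills;
            # break ties by available hours.
            best = max(pool, key=lambda p: (len((p.skills & wanted_skills) - covered),
                                            p.hours_available))
            pool.remove(best)
            team.append(best)
            covered |= best.skills
        teams.append(team)
    return teams


if __name__ == "__main__":
    people = [
        Participant("Ana", {"ml"}, 30),
        Participant("Ben", {"ml"}, 20),
        Participant("Chi", {"design"}, 10),
        Participant("Dev", {"writing"}, 25),
    ]
    for team in form_teams(people, team_size=2, wanted_skills={"ml", "design", "writing"}):
        print([p.name for p in team])  # prints ['Ana', 'Dev'] then ['Ben', 'Chi']
```

Greedy coverage is easy to reason about, but it won't always find the best overall assignment, and it ignores things like time zones or stated interests. It also can't replace the conversation that, as noted above, makes teams actually work.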

What's the realistic time commitment for someone doing this properly? Not the minimum viable participation, but doing it in a way that actually produces something you'd want to show someone.

Honestly, more than most people budget for. For a forty-eight-hour event, you're probably looking at thirty to forty hours of actual engagement if you want to produce a submission you're proud of. That includes the pre-event prep, the team formation, the actual build time, the documentation, and the demo preparation. If it's a longer format event, the hours are more distributed, but the total is similar. People who go in expecting to "dip in for a few hours over the weekend" either don't submit or submit something they're embarrassed by.

What are you actually expected to hand over at the end?

This varies by event, but the standard package is a working demo or prototype, a GitHub repository with the code, a short written description of what you built and why, and some kind of presentation, either a recorded video or a live pitch. The documentation piece is consistently underweighted by participants and weighted heavily by judges. I've seen technically impressive projects lose to less sophisticated ones because the documentation was clear, the problem statement was well-articulated, and the judges could actually understand what they were looking at.

Which is its own kind of skill. The ability to explain what you built to someone who wasn't in the room while you were building it.

It's a skill that transfers directly to real work. A model that works but can't be explained is a liability. The hackathon documentation requirement is basically a forcing function for the kind of communication that makes technical work actually useful in an organizational context.

Let's talk about the benefits beyond the prize, because I think the prize is actually the least interesting thing about a well-run hackathon. The expected value of winning any given prize is low. The expected value of the other stuff is much higher.

The portfolio piece alone is worth the entry cost, and most hackathons are free to enter. A project built under real constraints, with a defined problem, a collaborating team, and a submission deadline, is a categorically different kind of portfolio artifact than a personal project you tinkered with over six months. It's verifiable, it has a timestamp, it has a problem statement that wasn't invented by you, and it demonstrates you can ship under pressure. Hiring managers who understand the hackathon context read those submissions differently.

There's also the skills piece, which is more granular than people give it credit for. You're not just learning a technology, you're learning how to make decisions under time pressure, which is a different cognitive mode than leisurely exploration.

The constraint-driven learning effect is real. When you have forty-eight hours, you make choices about what to implement and what to stub out in a way that forces genuine prioritization. You can't gold-plate anything. That's actually a very transferable skill, knowing what the minimum viable technical foundation is for a given problem. A lot of people with strong theoretical backgrounds struggle with this because they've never had to ship something with an actual deadline.

Then there's the collaborator pipeline, which I think is the most underappreciated benefit. You spend a weekend working intensely with someone, you know pretty quickly whether you'd want to work with them again.

The signal quality is high. You see how someone handles ambiguity, how they communicate when things aren't working, whether they disappear when the going gets hard or dig in. That's a much richer data set than you'd get from a coffee chat or a LinkedIn connection. The people who come out of hackathons with two or three genuine collaborators they'd vouch for, that's a career asset that compounds over time.

There's a survey figure I want to bring in here, because it's striking. Something like seventy percent of AI hackathon participants report gaining valuable industry connections from the experience. Which is high, but I'd also push back slightly on what "valuable connection" means in that context.

Right, there's a difference between "I added someone on LinkedIn" and "I have a person I can call when I'm stuck on a problem." The survey number is probably capturing both, which inflates it somewhat. But even if you discount heavily, the underlying phenomenon is real. The shared intense experience creates a social bond that cold outreach never does.

There's a hiring dimension too, which is less obvious. Companies that sponsor hackathons are often there specifically to scout. It's a much lower-cost way to evaluate technical talent than a formal interview process, and the evaluation is based on actual work product rather than performance under interview conditions.

Some companies are very explicit about this. They have recruiters in the Discord, they're watching the submissions, and the path from "top hackathon submission" to "recruiter DM" is shorter than people realize. I've seen accounts from participants who got interviews at companies they'd never thought to apply to because someone from that company was paying attention to what they built.

Which is a completely different dynamic than the traditional job application funnel. You're being evaluated on something you actually made, in a context that approximates real work conditions, rather than on your ability to solve contrived whiteboard problems.

The evaluation is mutual. You're also evaluating the company. The way a company shows up in a hackathon, how their developer advocates engage in the Discord, whether their mentors are actually helpful or just there to demo their product, that tells you something real about the culture. I've heard people say they decided not to pursue opportunities at companies specifically because of how those companies behaved in hackathon communities.

That's a healthy inversion. The candidate is doing due diligence on the employer through their behavior in a community context.

Which is only possible because the community exists. Which brings us to the community piece itself, because that's really the long-game value of the hackathon ecosystem. The event is the occasion, but the community is the asset.

Building that community from digital interactions is its own skill set. The Discord intro that goes nowhere versus the Discord intro that turns into a real working relationship, what distinguishes them?

The follow-through, almost entirely. Most people are fine at the initial introduction. They post something coherent, they respond to a few messages, they have a reasonable conversation during the event. Where almost everyone falls down is the post-event window. The event ends, the adrenaline drops, everyone goes back to their lives, and those connections evaporate unless someone actively maintains them. The default is silence, and silence is sticky.

The protocol for not letting that happen?

The first move is simple and it needs to happen fast. Within twenty-four to forty-eight hours of the event ending, you send a specific message to the people you actually connected with. Not "great working with you," which is a dead end, but something that references a specific moment or conversation from the event and opens a door. "I've been thinking about that approach you proposed for the embedding layer, I found a paper that's relevant, want to talk through it?" That's a message that has somewhere to go.

Specificity is the whole thing. Generic warmth doesn't create obligation or interest. A specific reference to something they said creates both.

It demonstrates you were paying attention, which is its own signal. The transition from async to synchronous is the next threshold, and it's where a lot of people stall. Moving from text in a Discord channel to a video call feels like a big ask, but it doesn't have to be framed that way. "I'm going to be looking at this problem for another hour, want to hop on a call?" is lower stakes than "let's schedule a meeting." The informality of the invitation matches the informality of the context.

The DM etiquette piece matters here too, because there's a version of post-hackathon outreach that tips into being annoying, and people are often not sure where the line is.

The principle I'd offer is that every message you send should contain something of value to the recipient, not just something you want from them. If you're asking for a favor, an introduction, a referral, without having offered anything first, you're withdrawing from an account that has no deposits. The people who build real networks from hackathons are the ones who share things, resources, opportunities, observations, without a transactional expectation. The reciprocity happens, but it's not engineered, it's emergent.

Which is actually how the best professional relationships work in any context. The hackathon just accelerates the timeline because the initial shared experience is so compressed and intense.

There's also a broader community infrastructure that people underutilize. The hackathon is one entry point, but it's not the only one. Local meetups still have a robust presence in the AI space, particularly groups that want to maintain a real-world component even when most of their activity is online. The quality varies enormously, but the groups that have been running for two or more years and have consistent attendance, not just spikes around trending topics, are the ones worth investing in.

How do you identify the thriving ones from the ones that are basically a Zoom call with three people who are also in the organizer's LinkedIn network?

Consistency is the primary signal. A group that has met monthly for two years has demonstrated something about its value to its members. Look at the event history, not just the upcoming events. If there's a pattern of events being canceled or rescheduled, that tells you the organizer is struggling to sustain it. Look at the attendee count trends over time, not just the absolute number. A group that's grown from thirty to eighty over eighteen months is a different thing than a group that's been stuck at fifteen for three years.

The content of the events matters. There's a difference between a group that does talks and panels versus one that does actual working sessions or project showcases.

The working session format is underrated. It's harder to run and harder to fill, but the connections that form from sitting next to someone and actually building something together, even for two hours, are more durable than the ones that form from listening to the same talk. Some of the best local AI groups I've heard about have structured their meetups as show-and-tell sessions where members bring something they're working on and get real-time feedback. That format creates obligation, because you have to actually make something, and it creates a shared investment in each other's work.

It's worth noting that you don't have to be a strong coder to get value from either of these contexts. I think there's a real misconception that hackathons are only for engineers, and that you're useless in these communities if you can't write production code.

This is one of the things the coverage consistently gets wrong. The limiting factor at most hackathons is not coding ability, it's problem framing, domain expertise, design thinking, communication, and project management. A team of four engineers with no one who can write clearly or think about user needs is a worse team than one with three engineers and someone who can do those things well. The events that attract diverse skill sets produce better work, and the organizers of serious events know this.

If you're coming in as a product person, a domain expert, a writer, a designer, what's the actual contribution?

Problem scoping is the biggest one. The teams that spend the first few hours clearly defining what they're building and why, rather than just starting to code, tend to produce better submissions. If you're the person who can facilitate that conversation, who can push back when the scope is too broad or the problem is ill-defined, that's an enormous contribution. Documentation is another one. The submission that wins on documentation quality is often the one where someone took ownership of that piece early and kept it updated throughout the event rather than scrambling to write it in the last two hours.

Then there's the graceful exit, because not every hackathon you join will be the right fit, and knowing how to leave without burning bridges is its own skill.

The key is early communication. If you realize by hour six that the team isn't working, or the challenge isn't what you expected, or you simply don't have the bandwidth you thought you did, say something directly and early. "I'm realizing I'm not the right fit for this team's direction, I'm going to step back so you can find someone who fills this role better" is a completely acceptable thing to say. What damages relationships is disappearing, going silent, leaving people to wonder if you're still in or not. The hackathon community is smaller than it looks, and the people who ghost their teams get remembered.

The reputation economy in these communities is real and it's long. You will see the same people at multiple events over years.

That's actually one of the most important things to internalize about the hackathon ecosystem. It's not a series of isolated events, it's a recurring community where your behavior compounds. The person who's consistently generous, who shares useful resources, who gives genuine feedback on other teams' demos, who shows up and does the work, that person accumulates a reputation that opens doors that are invisible to someone who treats each event as a standalone transaction.

Which is really the meta-point. The hackathon is the occasion, but you're investing in a community that exists between events and long after any specific project is forgotten.

The AI community specifically is at a moment where that investment has unusual leverage. The field is moving fast enough that the people you meet at a hackathon today might be running a significant project or company in two years. Being a known, trusted presence in those networks before that happens is valuable. The early community building in any fast-moving technical field tends to create relationships that persist and matter even as the landscape shifts dramatically around them.

There's something almost paradoxical about it. The faster the technology moves, the more valuable the human relationships become, because the technology alone can't give you the context and trust you need to navigate it.

The signal problem is too severe. When everything is moving fast, the question of who to trust, whose judgment to rely on, whose work is actually solid, those questions become harder to answer from first principles and easier to answer through networks of people who have actually worked together. The hackathon is one of the best mechanisms we have for building that kind of trust at scale, because it's based on actual demonstrated work rather than credentials or self-presentation.

Alright, we've covered a lot of ground here. When it comes to vetting events and navigating that first experience, one thing that's changed dramatically is the scale and infrastructure of AI-focused events. That's worth unpacking, because it shapes how listeners approach them today.

The numbers give you a sense of the scale we're operating at: something in the range of a hundred and fifty AI-focused events per quarter globally. And the infrastructure underneath them has matured. Dedicated judging platforms, async-friendly timelines built around distributed teams, multi-stage formats that give participants room to iterate rather than just sprint and collapse. That's a different thing than what hackathons looked like five years ago.

The format evolution matters because it changed who can actually participate. When it was a seventy-two hour continuous grind, you were selecting for a very specific kind of person with a very specific kind of availability.

You were selecting against a lot of people who have valuable things to contribute. The async model opened it up. You get a data scientist in Berlin and a domain expert in Nairobi and a product person in Seoul on the same team, and the event structure actually accommodates that rather than fighting it.

What makes the AI space specifically interesting, as opposed to hackathons in other domains, is how quickly the tools themselves are changing. You can show up to an AI hackathon with a capability that didn't exist six months ago and build something novel.

The ceiling on what's achievable in a weekend keeps moving, which means the events keep producing things that surprise even the organizers. That's unusual. Most hackathon domains have fairly predictable output. The AI space doesn't, and that's part of what keeps serious practitioners engaged. You're not just competing, you're also learning what's now possible.

The community that forms around that shared discovery is different in character from a lot of professional networks. There's a genuine collaborative energy because everyone is slightly bewildered by the same things at the same time.

Which is a rare and useful condition for building real relationships. Shared confusion is underrated as a bonding mechanism. Shared expertise creates hierarchy. Shared confusion creates peers.

Let's get into the actual vetting process, because the scale we're describing means there's a real signal-to-noise problem. A hundred and fifty events per quarter globally, thirty percent of all virtual hackathons being AI-focused, that's a lot of events competing for your attention and your weekend.

The quality variance is enormous. The most reliable early signal is the challenge design. A well-run hackathon publishes its challenge tracks in advance with enough specificity that you can actually evaluate whether your skills are relevant. Vague prompts like "build something with AI" are a red flag. Not because ambiguity is inherently bad, but because it usually means the organizers haven't done the work of thinking carefully about what they want participants to produce.

The AI for Good Hackathon this year is a useful contrast. They co-designed their challenge tracks with the NGOs who were going to use the outputs. So participants weren't building into a void. They knew from day one that a specific organization with a specific operational problem was going to evaluate and potentially deploy what they built.

That structure changes everything about the quality of submissions. When there's a real stakeholder on the other end, teams self-select for seriousness and the work reflects it. Two of the projects from that event moved into actual pilots within three months of the hackathon ending. That's not typical, but it's what the best-organized events are capable of producing.

How do you distinguish that kind of event from one that's essentially a marketing exercise dressed up as a hackathon? Because some of them are just lead generation for a company's developer platform.

The prize structure is one indicator. Sponsor-driven events tend to concentrate prizes in one or two top slots, because the goal is a headline number that looks impressive in a press release. Events that are actually invested in community and skill development tend to distribute rewards more broadly: recognition tracks, category prizes, mentorship opportunities, introductions to hiring teams. The money matters less than what the prize structure reveals about what the organizers value.

The Discord pre-event activity is another one. If the server is active weeks before the event starts, if organizers are posting resources, if participants are already introducing themselves and forming early connections, that's a community that has momentum. If you join and it's silent except for a pinned announcement, that silence is information.

Once you've identified an event worth joining, the format question is what shapes the actual experience. Most serious AI hackathons now run on a multi-stage model. There's a registration and team formation period, then a challenge release, then the build window, which is typically forty-eight to seventy-two hours but structured to accommodate async participation, and then a submission and judging phase. The judging itself is often multi-round, with a panel review followed by finalist demos.

Team formation is where a lot of first-timers underestimate the time investment. It's not just finding warm bodies to fill roles. It's finding people whose working style, availability, and skill profile actually complement yours across a compressed timeline.

The events that handle this well create structured team formation channels with role tags. You can post that you're a machine learning engineer looking for a product partner and a designer, and people can find you. The events that leave it entirely unstructured tend to produce teams that are either redundant in skills or have critical gaps they discover at hour thirty when it's too late to fix.

The time commitment is real. Even with async formats, a serious submission requires something in the range of twenty to thirty focused hours over the event window. That's not a casual weekend activity.

Which is why the benefits beyond prizes matter so much. If you go in only optimizing for winning, and you don't win, the experience feels like a loss. If you go in optimizing for the portfolio piece, the collaborators you meet, and what you learn about your own working style under pressure, those returns are much more reliable. A strong submission that doesn't place is still a concrete artifact you can point to, with a GitHub repository, a demo, a write-up that demonstrates how you think about problems.

The hiring angle is underplayed. Recruiters and hiring managers from serious AI companies pay attention to hackathon results, not just the winners. Someone who built something technically coherent and documented it well is demonstrating things that a resume can't.

The collaborator pipeline is real. The person you build with over a weekend knows your work ethic, your communication style, and your technical judgment in a way that someone who only knows you through a LinkedIn profile simply doesn't. That's a different quality of professional relationship, and it's one that tends to generate referrals and project invitations long after the event is over.

That referral dynamic is something people really underestimate. Because the currency in these communities isn't credentials, it's demonstrated judgment. And you can't fake that over a weekend of actual work.

The follow-through is where most people leave value on the table, though. You have this intense shared experience, you've built something together or had an interesting conversation in a Discord thread at two in the morning, and then the event ends and everyone disperses and that connection just... fades. Because nobody did the thirty seconds of work required to preserve it.

What does good follow-through actually look like? Because "connect on LinkedIn" is the professional equivalent of saying you'll call someone and then never doing it.

The timing matters more than the medium. If you wait more than forty-eight hours after the event closes, the connection has already cooled. The message that lands best is specific to something that actually happened. Not "great meeting you at the hackathon" but "I've been thinking about the approach your team took to the data pipeline problem, I had a follow-up thought." That's a message that proves you were paying attention and gives the other person something to respond to.

You're restarting the conversation rather than just logging the contact.

The transition from async to synchronous matters a lot for the connections that are actually going to develop into something. A Discord exchange can go on for weeks without deepening. One thirty-minute video call does more for the relationship than a month of text threads, because you're actually reading each other in real time. The ask doesn't need to be elaborate. "Want to do a quick call to debrief on the event and what we each learned?" Most people will say yes to that.

DM etiquette is a real thing in these communities and I don't think it gets discussed enough. Because the wrong approach in a Discord DM can close a door that would otherwise have been open.

The baseline rule is don't lead with an ask. If the first message someone receives from you is a request for something, whether a referral, a code review, or a job lead, you've established the relationship as transactional before it's had a chance to be anything else. The opener should be contribution or genuine curiosity. Share something relevant to a conversation they were part of. Ask a question you actually want answered.

Read the room on frequency. One unreturned message is information. Two unreturned messages is a pattern. Three is harassment.

That's a harder rule than it sounds in practice, because the people you most want to connect with are often the most responsive in public channels and the least responsive to direct outreach, because they're fielding a lot of it. The way around that is to be consistently visible and useful in the public channels first, so that when you do reach out directly it's not coming from nowhere.

Which brings us to the broader community infrastructure beyond the hackathons themselves, because the events are entry points but they're not the whole ecosystem.

Meetup dot com still works, which surprises people. Local AI meetups in most mid-sized cities have gotten substantive. These aren't just networking nights with name tags; the better ones have paper discussions, project showcases, and working sessions. The signal is whether the organizer is a practitioner or an event coordinator. Practitioner-run meetups tend to attract other practitioners. Event-coordinator-run meetups tend to attract people looking for jobs and vendors looking for leads.

How do you tell the difference before you show up?

Look at the talk history. If the recent events feature people presenting actual work they've done, with technical specificity, that's a practitioner community. If the lineup is mostly company representatives talking about their product's capabilities, that's a marketing channel with a meetup wrapper.

The online communities that have staying power tend to have a similar characteristic. There's actual work being shared, not just commentary on work being done elsewhere.

The AI communities that I've seen develop real depth have a few things in common. They have a clear scope that's specific enough to attract people with shared interests but broad enough to sustain conversation. They have norms around quality, explicit or implicit, that filter out low-effort participation. And they have some mechanism for people to demonstrate work, whether that's a showcase channel or regular project updates or a structured critique process.

The communities that collapse tend to be either too broad, so the signal-to-noise ratio degrades as they grow, or too narrow, so they exhaust their subject matter within a few months.

The practical question for someone trying to find their community is to look for the one where the people who are slightly ahead of you are still actively participating. If the senior practitioners have already left, the community is past its useful phase for learning. If they're still in the channels, still answering questions, still sharing their current work, that's a living ecosystem.

One thing we haven't addressed is the person who wants to participate but doesn't have strong coding skills. Because I think there's a real misconception that hackathons are only for engineers.

It's one of the more persistent myths about the format. The teams that win are almost never pure engineering teams. They're teams that can build something, explain why it matters, demonstrate it compellingly, and connect it to a real problem. Those last three are not engineering skills. They're research, communication, and domain expertise skills. A team that's all engineers building in a vacuum loses to a team with a strong domain expert who kept them honest about whether the thing they built actually solves anything.

The practical implication being that if your background is in, say, public health or education or logistics, you're not a passenger in an AI hackathon. You're potentially the person who makes the team's project coherent.

The roles that are underrepresented in most hackathon teams are product thinking, user research, clear writing, and visual communication. If you can do any of those things well, you will not struggle to find a team that wants you.

Then there's the graceful exit question, because not every hackathon is going to be the right fit once you're actually in it.

The key is to know your exit conditions before you start. If you join a team and within the first few hours it's clear that the working styles are incompatible or the project direction is somewhere you can't contribute, it's better to have that conversation early and clearly than to disappear. The community is small enough that how you exit matters almost as much as how you showed up. A clean, honest conversation about fit is forgettable. Ghosting is not.

The AI community has a long memory for people who are consistently unreliable, and a surprisingly long memory for people who were consistently decent. That's why first impressions matter so much, especially at your first event.

So let's make this concrete for someone who's sitting on the fence about attending their first event.

Because the wrong hackathon is worse than no hackathon. It poisons the well for the ones that would actually be worth your time.

The checklist I'd run through: look at the judging panel before anything else. If the judges are primarily investors with no technical background in the problem domain, the event is optimizing for pitchability, not for building. If the judges include practitioners, researchers, or people who've actually shipped products in the relevant space, the evaluation criteria will reward substance.

Prize structure as a secondary signal, which we touched on earlier. Concentrated top prizes mean the event is designed for a handful of winners. Distributed rewards across multiple tracks mean they're trying to surface a broader range of good work.

Check the Discord, or whatever the community channel is, before registration closes. The density and quality of pre-event conversation is the best leading indicator of whether the community showing up will be worth being around for forty-eight hours.

On the connection side, the single most reliable thing I'd tell someone is to write down three names before the event ends. Three people whose work or thinking impressed you. And reach out to each of them within forty-eight hours with something specific. Not a form message. Something that proves you were actually paying attention.

That asymmetry matters. Most people don't do it. Which means the bar for being memorable is surprisingly low.

Before you even register, decide what you're walking away with if you don't win. A portfolio piece, a collaborator in a specific domain, a clearer sense of your own working style under pressure. The participants who get the most out of these events are the ones who defined success before they started.

The preparation window is real. Even a few hours of reading on the announced challenge domain before the event opens puts you in a meaningfully different position than walking in cold.

And that's the kind of edge that compounds. You show up prepared, you connect with three people afterwards, you do it again at the next event, and six months later you have a network that took other people three years to build.

The bigger question I keep coming back to is what these events look like in another few years. The tooling is changing fast enough that the forty-eight-hour build window that used to require a full team of engineers is increasingly achievable by two people with strong prompting instincts and good judgment about what to build. Which changes the composition of competitive teams pretty dramatically.

It might actually democratize the format in a way that the "anyone can participate" messaging never quite managed to make real. If the technical floor drops far enough, the differentiator becomes the quality of the idea and the clarity of the problem framing. Which plays to a much wider range of people.

The career implications are real. We're already seeing hiring decisions that weigh hackathon portfolios seriously, not as a novelty but as evidence of how someone actually works under constraint, collaborates with strangers, and ships something complete. That's hard to fake and hard to demonstrate any other way.

The resume line used to be the credential. Now it's increasingly the artifact. What did you build, who saw it, what happened next.

That shift is still playing out, but the direction feels pretty clear. Thanks to Hilbert Flumingtop for producing, and to Modal for keeping the infrastructure running so we can keep this show going. This has been My Weird Prompts. If you've been enjoying the episodes, a review on Spotify helps more people find us. We'll see you next time.