#interpretability

6 episodes

#2405: LLM Benchmarks Are Full of Noise: Statistical Rigor in AI Evals

Why most benchmark claims in AI are statistically indefensible — and what to do about it.

#2188: Is Emergence Real or Just Bad Metrics?

The debate over whether AI models exhibit genuine emergent abilities or just appear to because of how we measure them—and why it matters for safety...

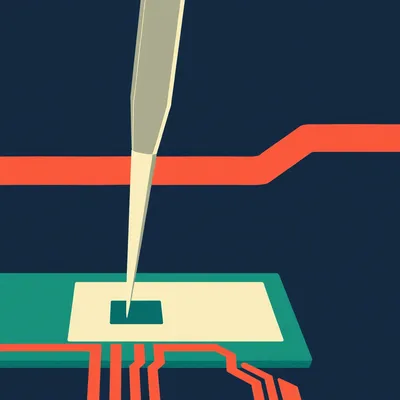

#1561: Abliteration: The High-Dimensional Lobotomy of AI

Discover how researchers are surgically removing refusal filters from AI models using a mathematical process called abliteration.

#1328: Silicon Sigils: Why We Treat AI Like an Occult Force

Is AI a tool or a digital demon? Explore why technical illiteracy is turning neural networks into a modern-day moral panic.

#1001: Why Your 1990s Credit Card Was Smarter Than ChatGPT

Think AI started with ChatGPT? Discover the "long haulers" in defense, medicine, and finance who have used machine learning for decades.

#974: Inside the Black Box: The Mystery of Emergent AI Logic

We build digital cathedrals but lack the blueprints. Explore the "black box" of AI, emergent abilities, and the mystery of double descent.