Hardware & GPUs

AMD ROCm, NVIDIA CUDA, AI accelerators, Coral TPU

2 episodes

#2911: Building a $180 Privacy-First AI Wearable

How Omi's $99 dev kit lets you build a local-first voice productivity system that watches your screen.

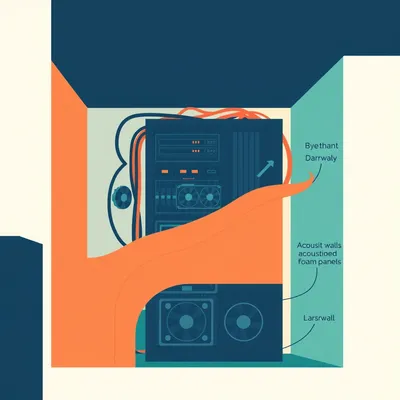

#2193: Running Claude in Your Apartment (The Physics Says No)

Building a local AI inference server to rival Claude Code sounds great until you do the math on heat, noise, and neighbor relations.