#context-window

29 episodes

#2684: When Agent Skills Collide: Context Windows & Plugin Design

How to handle overlapping agent skills and whether context windows will ever make the problem go away.

#2683: MCP vs Agent Skills: Context Wars

When 12M token windows arrive, do MCP servers or agent skills win? Plus: federated access for agent teams.

#2674: Why Your Agent's Context Window Is Getting Eaten Before You Start

Stop shipping the whole toolbox to every session. A bridge plugin pattern that fetches skills on demand instead.

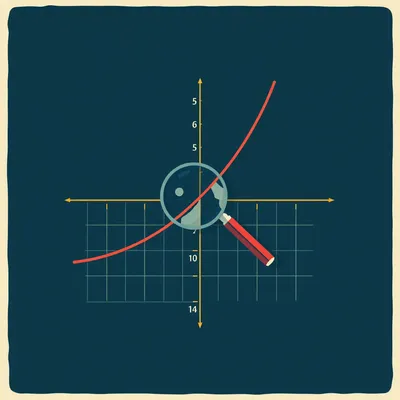

#2672: When a Startup Claims to Break the Quadratic Wall

A startup claims linear attention scaling at 12M tokens, beating GPT-5.5 on retrieval benchmarks.

#2638: How to Build Disposable AI Agents at Runtime

Create ephemeral AI agents that answer questions about specific items, then vanish. No persistent configuration needed.

#2634: The Two-Stage Pipeline for Persistent User Memory

How to extract durable personal context from raw prompts and build a self-healing memory layer for AI systems.

#2551: How Progressive Disclosure Saves MCP from Token Bloat

Why dumping all tool schemas into context breaks accuracy — and three implementations that fix it.

#2406: Why Million-Token Context Windows Can't Handle 3 Reasoning Steps

Needle-in-a-haystack is dead. Here's what actually measures whether models can think across long documents.

#2366: Why LLMs Forget the Middle of Long Conversations

Why do large language models struggle with the middle of long conversations? Explore the science behind attention dilution and practical fixes.

#2353: Evaluating Enterprise AI: Palmyra X5

Explore Palmyra X5, Writer’s flagship AI model designed for enterprise workloads, featuring a million-token context window and agentic capabilities.

#2312: When Bigger Context Windows Aren't Better

Exploring the real-world impact of massive context windows in AI models, from academic research to codebase analysis.

#2205: When AI Coding Agents Forget: Five Approaches to Context Rot

As coding agents handle longer sessions, they accumulate noise and lose crucial information. Five competing frameworks are solving this differently...

#2164: Why Bigger Context Windows Don't Fix Attention

Frontier models have million-token context windows, but attention degrades well before you hit the limit. New research reveals why bigger isn't bet...

#2062: How Transformers Learn Word Order: From Sine Waves to RoPE

Transformers can’t see word order by default. Here’s how positional encoding fixes that—from sine waves to RoPE and massive context windows.

#2057: How Agents Break Through the LLM Output Ceiling

The output window is the new bottleneck: why massive context doesn't solve long-form generation.

#2005: Beyond Vibes: The Hard Science of LLM Evaluation

Running the same LLM on different GPUs can produce different results. Here’s why that happens and how to test for it.

#1913: AI Context Windows Are Junk Drawers

Stop paying for old messages. Here's how to keep your AI sessions clean and on-topic.

#1856: Two AIs Chatting Forever: Why They Go Crazy

What happens when two ChatGPT instances talk forever? They hit a politeness loop, forget their purpose, and spiral into gibberish.

#1828: Mastering 2M Token Context in Agentic Pipelines

A massive context window sounds like a dream, but it can quickly become a nightmare for complex AI workflows.

#1811: Stop Hardcoding User Names in AI Prompts

Three methods for storing user identity in AI agents—and why the "Fat System Prompt" breaks production apps.

#1718: The Ralph Wiggum Technique: AI That Codes Itself

Stop babysitting AI agents. Learn the Ralph Wiggum technique to automate iterative coding loops and let AI finish the job itself.

#1708: Why Your AI Agent Forgets Everything (And How to Fix It)

Learn how Letta's memory-first architecture solves the AI context bottleneck for long-term agents.

#1629: From DAGs to Loops: Why Agents Need Stateful Cycles

Stop building linear chains and start building cycles to create agents that can reason, self-correct, and maintain complex state.

#1573: Speed vs. Reasoning: The AI Divide

Claude and Gemini go head-to-head in a heated debate over speed, reasoning, and who really owns the future of AI.

#1498: The Multi-Player Shift: Sharing One AI Brain

Stop copy-pasting prompts. Explore how shared "multi-player" AI is turning solitary chatbots into collaborative team members.

#917: When AI Agents Hit the Context Wall

Herman and Corn dive into Cloud Code and nested AI agents. Can "agent mirror organizations" solve the context window crisis?

#795: From Chat to Do: The Rise of Autonomous AI Agents

Explore the shift from simple chatbots to agentic swarms and how sub-agent delegation is solving the problem of context degradation.

#133: When Quantum Computing Becomes Accessible

Discover how quantum computing is transforming AI from brute-force scaling to surgical precision in this deep dive into the 2026 tech landscape.

#126: The Cocktail Party Problem: Why AI Forgets

Why do AI models "lose the plot" after a few thousand words? Discover the mechanics of attention and the innovations solving context window limits.