Daniel sent us this prompt about what actually happens inside the buildings everyone's calling AI data centers. He heard our earlier conversation about sustainability and power demands, and he's stuck on the physics of the thing — specifically, how do you take a legacy data center full of CPU racks and retrofit it for GPUs when the cooling requirements are completely different? And the deeper question underneath that: when we read about these new AI data centers going up, what are they actually building inside?

This is the part of the conversation that doesn't get enough oxygen. Everyone fixates on the GPU supply chain, how many H100s NVIDIA shipped last quarter, whether Blackwell is delayed. But the cooling infrastructure is where the real engineering decisions happen, and it's what determines whether a facility can even accept those GPUs in the first place. I'd argue it's more consequential than the chip allocation drama, because you can always wait for silicon, but if your building literally cannot reject the heat, no amount of patience solves that.

Because you can't just wheel a rack of GPUs into a colo cage and plug it in.

You absolutely cannot. A standard legacy data center rack is designed for somewhere between five and ten kilowatts. A fully populated GPU rack for AI training — we're talking NVIDIA DGX systems or equivalent — can draw sixty to a hundred kilowatts per rack. That's not an incremental change. That's an order-of-magnitude jump in power density, and the cooling system has to handle every watt of that. To put that in human terms: a ten-kilowatt rack is like running four or five home clothes dryers continuously in a box the size of a refrigerator. A hundred-kilowatt rack is like running a small commercial bakery in that same footprint. You can't just open a window.

The physics problem is: air can only carry so much heat away per cubic meter per second.

There's a hard thermodynamic limit. Air has a specific heat capacity of about one kilojoule per kilogram per kelvin, and it's light, about 1.2 kilograms per cubic meter, so each cubic meter of air carries very little heat per degree of temperature rise. If you're trying to pull sixty kilowatts out of a single rack using air, you need enormous volumes of it moving at speeds that are impractical, loud, and energy-inefficient. You hit what the industry calls the air-cooling wall somewhere around twenty to thirty kilowatts per rack. Beyond that, you're in liquid territory whether you like it or not.
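To put a number on the air-cooling wall, here is a back-of-envelope Python sketch of the sensible-heat balance (heat = density × specific heat × airflow × temperature rise), solved for airflow. The air density of 1.2 kg/m³, the 15 K rise from cold aisle to hot aisle, and the function name are assumptions for illustration, not figures from the conversation.

```python
# Sketch: airflow needed to carry a rack's heat away with air alone.
# Assumed values: air density 1.2 kg/m^3 and a 15 K cold-aisle to
# hot-aisle temperature rise.

AIR_DENSITY = 1.2      # kg/m^3, assumed (near room conditions)
AIR_CP = 1005.0        # J/(kg*K), about 1 kJ/(kg*K)
M3S_TO_CFM = 2118.88   # cubic feet per minute in 1 m^3/s


def required_airflow_m3s(heat_w: float, delta_t_k: float = 15.0) -> float:
    """Airflow (m^3/s) at which density * cp * flow * delta_t equals the heat load."""
    return heat_w / (AIR_DENSITY * AIR_CP * delta_t_k)


for rack_kw in (10, 30, 60, 100):
    flow = required_airflow_m3s(rack_kw * 1000)
    print(f"{rack_kw:>4} kW rack: {flow:5.2f} m^3/s (~{flow * M3S_TO_CFM:,.0f} CFM)")
```

Under those assumptions, a 60 kW rack needs roughly 3.3 cubic meters of air per second, around 7,000 CFM, through a single cabinet: six times what a 10 kW rack needs. Halving the allowed temperature rise doubles the airflow, which is why pushing harder on air runs out of road so quickly.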

Which is how we got from RGB gaming rigs to essential infrastructure. The liquid cooling that looked like enthusiast overkill for years suddenly becomes the only thing that works.

It's not one thing. The prompt asks what the liquid is, how the plumbing works, whether you can submerge a hundred-thousand-dollar GPU — these are exactly the right questions. Let me break down the taxonomy, because there are really three approaches and they're suited to different scales.

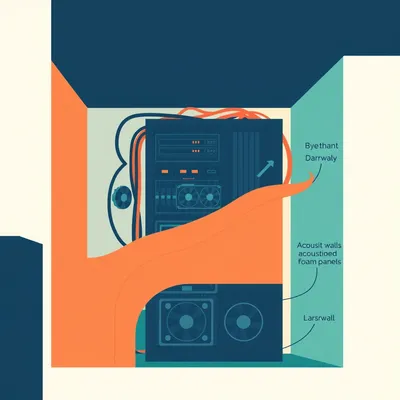

The first and most common right now is direct-to-chip liquid cooling. A cold plate sits on top of the GPU and the CPU, coolant circulates through it, and it pulls heat away at the source. This typically captures about seventy to eighty percent of the server's total heat load. The remaining heat, from memory modules, voltage regulators, and other board components, still needs air cooling, but at a much reduced level. This is the retrofit path. You can take existing racks, add cold plates and a coolant distribution unit, and suddenly your thirty-kilowatt rack is manageable.

That's the bridge technology for legacy data centers that want to add GPU capacity without gutting the whole building. But what does that actually look like in practice? If I'm a data center operator and I decide to go this route, what am I physically doing to my facility?

You're installing what's essentially a secondary plumbing network. The coolant distribution unit — the CDU — sits at the end of a row or in a dedicated mechanical space. It pumps cooled liquid out through supply lines that run along the rack rows, with drop lines that connect to each cold plate. The heated liquid returns through a separate return line, goes through a heat exchanger in the CDU, and cycles back. The CDU itself is then connected to the building's primary cooling loop, which could be a chiller plant, a cooling tower, or a dry cooler outside. So you're adding an entire intermediate thermal loop that never existed before.
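A rough Python sketch of the two numbers a retrofit team would size first: how much of each rack's heat the cold plates take, and how much coolant the CDU has to pump to carry it. The 75 percent capture fraction (the midpoint of the seventy-to-eighty-percent range above), plain-water properties, the 10 K supply-to-return rise, and the function names are all assumptions.

```python
# Sketch: split a direct-to-chip rack's heat between cold plates and room air,
# then size the coolant flow the CDU must pump for the liquid share.
# Assumptions: 75% capture (midpoint of 70-80%), plain-water properties,
# and a 10 K rise between supply and return lines.

WATER_CP = 4186.0    # J/(kg*K); glycol mixtures are somewhat lower
WATER_DENSITY = 1.0  # kg/L, approximate


def split_heat(rack_kw: float, liquid_fraction: float = 0.75) -> tuple[float, float]:
    """Return (kW captured by cold plates, kW left for the air system)."""
    if not 0.0 <= liquid_fraction <= 1.0:
        raise ValueError("liquid_fraction must be between 0 and 1")
    to_liquid = rack_kw * liquid_fraction
    return to_liquid, rack_kw - to_liquid


def coolant_flow_lpm(heat_kw: float, delta_t_k: float = 10.0) -> float:
    """Liters per minute at which mass_flow * cp * delta_t equals the heat load."""
    mass_flow_kg_s = heat_kw * 1000.0 / (WATER_CP * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY * 60.0


for rack_kw in (30, 60, 100):
    liquid_kw, air_kw = split_heat(rack_kw)
    print(f"{rack_kw} kW rack: {liquid_kw:.1f} kW to liquid "
          f"({coolant_flow_lpm(liquid_kw):.0f} L/min), {air_kw:.1f} kW still to air")
```

With those assumptions, a 60 kW rack sends about 45 kW to the liquid loop at roughly 65 liters per minute, while about 15 kW still has to leave through the air. Per degree of temperature rise, a liter of water carries roughly 3,500 times more heat than a liter of air, which is the whole case for liquid in one ratio. At 100 kW, the 25 kW residual air load is back near the air-cooling wall.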

You're doing this in a building that was probably built with none of the pipe chases, none of the ceiling penetrations, none of the floor space for any of this.

You're retrofitting liquid into an architecture designed exclusively for air. It's like trying to add central air conditioning to a medieval cathedral. You can do it, but you're going to be running ductwork through stained glass windows.

That's the path of least resistance, but it's not trivial. You're running liquid lines into racks that were never designed for liquid. You need leak detection systems. You need to manage coolant chemistry. And you're still maintaining a hybrid system — liquid plus air — which means two cooling infrastructures to operate and maintain.
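Physical leak sensors under and around the racks are one layer; a simple software-side check is a flow balance, since in a sealed loop what goes out on the supply line should come back on the return. This is a hypothetical Python sketch: the FlowSample type, the 2 percent tolerance, and the three-sample persistence rule are illustrative assumptions, not any vendor's method.

```python
# Hypothetical sketch: flag a possible leak when a loop's return flow stays
# below its supply flow. Type names, the 2% tolerance, and the 3-sample
# persistence rule are assumptions for illustration.

from dataclasses import dataclass


@dataclass
class FlowSample:
    supply_lpm: float
    return_lpm: float


def possible_leak(samples: list[FlowSample], tolerance: float = 0.02,
                  persistence: int = 3) -> bool:
    """True if each of the last `persistence` samples loses more than
    `tolerance` of supply flow before it returns (single noisy readings ignored)."""
    recent = samples[-persistence:]
    if len(recent) < persistence:
        return False
    return all(
        s.supply_lpm > 0 and (s.supply_lpm - s.return_lpm) / s.supply_lpm > tolerance
        for s in recent
    )


healthy = [FlowSample(65.0, 64.8)] * 5
leaking = healthy + [FlowSample(65.0, 62.0)] * 3
print(possible_leak(healthy), possible_leak(leaking))
```

Requiring several consecutive bad samples trades a little detection speed for fewer false alarms from meter noise. A balance check like this only sees leaks big enough to move the flow meters, so it complements physical sensors rather than replacing them.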

That dual-maintenance burden is one of those things that looks fine in a slide deck and becomes a nightmare in year three of operations. You've got two sets of filters, two sets of pumps, two sets of sensors, two different failure modes. Your facilities team has to be trained on both. Your spare parts inventory doubles. It's the kind of operational complexity that doesn't get priced into the initial excitement of "we can do GPU workloads now."

What's the coolant? Is this just water?

It's usually deionized water with corrosion inhibitors and biocides, what the industry calls treated water, or a water and propylene glycol mixture. Some systems use a true dielectric fluid instead. The lower the coolant's conductivity, the better, because even with a cold plate, you're millimeters from very expensive silicon. A single drip across the wrong trace and you've got a very bad day.

Which brings us to the second approach, which I'm guessing is the one that looks insane in photos.

Immersion cooling, and it comes in two flavors: single-phase or two-phase. In single-phase immersion, you submerge the entire server (motherboard, GPUs, everything) in a dielectric fluid. The fluid circulates, picks up heat, passes through a heat exchanger, and comes back cool. In two-phase immersion, the fluid actually boils at a low temperature, around fifty degrees Celsius, and the phase change from liquid to vapor absorbs enormous amounts of heat. The vapor rises, hits a condenser coil at the top of the tank, turns back to liquid, and rains back down. It's elegant thermodynamics.
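Boiling is so effective because of latent heat: a fluid absorbs far more energy changing phase than it does warming a few degrees. This Python sketch compares the two modes for a hypothetical 100 kW tank. The latent heat of 88 kJ/kg, the single-phase heat capacity of 2 kJ/(kg·K), and the 10 K rise are assumed round numbers, not datasheet values for any particular product.

```python
# Sketch: fluid that must boil (two-phase) vs. fluid that must circulate
# (single-phase) to absorb the same heat load. All property values are
# assumed round numbers, not figures for a specific product.

LATENT_HEAT_J_PER_KG = 88_000.0   # assumed heat of vaporization, two-phase fluid
SINGLE_PHASE_CP = 2_000.0         # assumed J/(kg*K), single-phase dielectric coolant
SINGLE_PHASE_DELTA_T = 10.0       # assumed K rise across the tank


def boil_off_kg_s(heat_w: float) -> float:
    """Mass of fluid vaporized per second (then condensed and returned)."""
    return heat_w / LATENT_HEAT_J_PER_KG


def single_phase_flow_kg_s(heat_w: float) -> float:
    """Mass flow needed when the fluid only warms up and never boils."""
    return heat_w / (SINGLE_PHASE_CP * SINGLE_PHASE_DELTA_T)


tank_w = 100_000.0  # hypothetical 100 kW tank
print(f"two-phase: {boil_off_kg_s(tank_w):.2f} kg/s boiled and condensed")
print(f"single-phase: {single_phase_flow_kg_s(tank_w):.2f} kg/s circulated")
```

Under these assumptions the two-phase tank boils and recondenses about 1.1 kg of fluid per second, while the single-phase tank has to circulate about 5 kg per second. The boiling fluid also stays pinned near its boiling point, so the hardware sees a nearly constant temperature.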

You're just... dunking the hardware. Like a deep fryer for compute.

A very expensive deep fryer. And yes, the fluid has to be completely non-conductive, chemically stable, non-corrosive. The most common fluids are fluorocarbon-based — 3M made Novec for years before discontinuing it, which caused a minor panic in the industry. Now there are alternatives from companies like Shell and Chemours. These fluids are engineered to have very specific boiling points and thermal properties.

Wait, 3M discontinued the standard immersion fluid? What did data centers that had already built around it do?

Stockpiled it, scrambled for alternatives, or switched to single-phase systems that use different chemistries. It was a wake-up call about supply chain dependencies that nobody had thought about. The fluid is as critical as the GPUs themselves — without it, the cooling system stops and the hardware fries in seconds.

That's the kind of dependency that doesn't show up in the glossy announcements about new AI data centers. You read the press release about the fifty-megawatt campus and nobody mentions that the entire thing depends on a specialty chemical manufactured by maybe three companies on Earth.

The Novec situation is worth dwelling on for a moment, because it's such a perfect case study in hidden fragility. 3M announced in late 2022 that it would exit PFAS manufacturing by the end of 2025, driven by regulatory pressure around the so-called forever chemicals, and the Novec fluids were part of that exit. Whether a given Novec fluid counts as a PFAS depends on which regulatory definition you use, but the whole line was caught in the broader corporate retreat from fluorochemistry. Suddenly, every immersion deployment built on Novec had a ticking clock. Operators who had built their thermal strategy around two-phase immersion were looking at a future where their coolant was going to become unobtainable.

They had to either stockpile years' worth of fluid or redesign their cooling architecture.

Stockpiling isn't trivial. These fluids aren't cheap, they require specific storage conditions, and the volumes are enormous. A single tank can hold thousands of liters. Multiply that by a facility with dozens of tanks, and you're talking about warehousing tens of thousands of liters of specialty chemicals. That's not a closet with some spare jugs. That's a hazmat storage facility.

This connects to the third approach, which is the most exotic and the least deployed: direct-to-chip with water. Not treated water, not dielectric fluid — straight municipal water or recycled water. This is what Google has been experimenting with, and what some of the hyperscalers are looking at for their next-generation facilities. The idea is that if you can engineer the cold plate and the seals perfectly, water is the most efficient heat transfer medium available. It's cheap, it's abundant, and its thermal properties are better than any synthetic fluid. The risk is obvious: water and electronics don't mix, and a failure mode here isn't a leak — it's catastrophic.

We should be precise about what "catastrophic" means in this context. It's not one server going down. If you have a water leak in a direct-to-chip system at scale, you're potentially losing an entire row, or an entire cluster, because water doesn't stay where you put it. It finds the lowest point — which is often a power distribution unit sitting in the bottom of a rack. I've heard engineers describe this as the "single-point-of-failure" problem: one failed O-ring, one micro-fracture in a cold plate, and you've cascaded water through a system that cost more than the building it sits in.

You're betting a few hundred million dollars of GPUs on a gasket.

And that's why it's only the hyperscalers with custom hardware who are going there. If you control the entire server design, you can build the cooling channels into the board itself. NVIDIA is moving the same way with its next-generation platforms: the Grace Blackwell rack-scale systems were designed around liquid cooling from the start, not bolted on after. When you're designing the chip, the board, and the cooling as a single system, you can eliminate a lot of the failure points. The cold plate isn't an aftermarket component clamped onto a GPU; it's engineered together with the module it cools.

The cooling becomes part of the chip architecture, not an add-on.

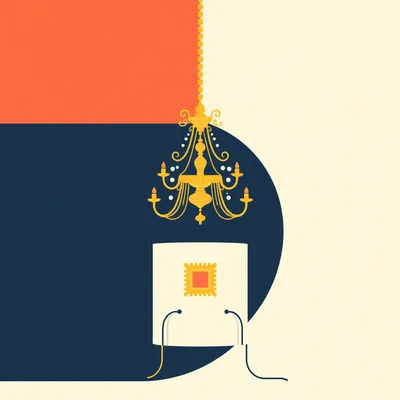

That's the direction the whole industry is moving. It's the only way to handle the power densities we're seeing. When you're at two kilowatts per GPU, the thermal interface between the silicon and the cold plate becomes the critical engineering challenge. You're trying to move heat across a surface area the size of a postage stamp at densities that approach a nuclear reactor's core. The materials science of that interface — the thermal pastes, the solders, the micro-channel designs — is as sophisticated as anything in the semiconductor itself.
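The heat-flux arithmetic behind that claim is a one-liner: power divided by area. This Python sketch assumes a die area of 8 cm² (roughly a large, reticle-sized GPU die) and, as a bracket, 16 cm² for a two-die package; the stovetop comparison uses an assumed 1.5 kW burner spread over about 200 cm². None of these areas come from the conversation.

```python
# Sketch: average heat flux for a 2 kW accelerator under assumed die areas,
# with an assumed kitchen stovetop burner for scale.

def heat_flux_w_per_cm2(power_w: float, area_cm2: float) -> float:
    """Average heat flux through a surface, in W/cm^2."""
    return power_w / area_cm2


for area in (8.0, 16.0):  # assumed die areas, cm^2
    print(f"2 kW over {area:.0f} cm^2: {heat_flux_w_per_cm2(2_000, area):.0f} W/cm^2")

# Comparison: an assumed 1.5 kW stovetop burner over about 200 cm^2
print(f"stovetop burner: {heat_flux_w_per_cm2(1_500, 200.0):.1f} W/cm^2")
```

That gives roughly 125 to 250 W/cm² for a 2 kW accelerator, against under 10 W/cm² for the burner, which is why the thermal interface, not the pipework, becomes the hard part.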

Which gets to the other half of the prompt: the interconnects. The data links between GPU clusters. The prompt mentions people thinking Cat 7 or Cat 8 Ethernet is fast, and then discovering that AI interconnects are a different universe.

The interconnects inside a GPU cluster mostly aren't the Ethernet you'd plug into a laptop. They're NVIDIA's NVLink or InfiniBand, or in some cases ultra-high-speed Ethernet at four hundred or eight hundred gigabits per second. But the physical layer matters enormously for cooling. At these speeds, copper is lossy, so it only works over short runs of a few meters, mostly inside a rack. Longer links are optical: active optical cables or pluggable transceivers, or in the newest designs, co-packaged optics, where the optical engines sit on the same package as the switch ASIC.

Optical cables have their own thermal constraints.

The transceivers — the modules at each end that convert electrical signals to light and back — they run hot. A single eight-hundred-gig optical transceiver can dissipate ten to fifteen watts. Multiply that by the number of connections in a cluster with thousands of GPUs, and you've got a meaningful secondary heat load. In an immersion cooling setup, those transceivers have to be rated for immersion. In a direct-to-chip setup, they still need airflow. The cooling design has to account for all of it.
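To see how ten to fifteen watts per transceiver adds up, here is a Python sketch of the aggregate optics load. The topology is a hypothetical simplification: one 800G link per GPU, a transceiver at each end of every link, and a two-tier switch fabric that needs about as many optics again between leaf and spine switches. Real cluster designs vary widely.

```python
# Sketch: aggregate heat from optical transceivers in a GPU cluster.
# Hypothetical topology: one link per GPU, a transceiver at both ends of
# each link, and `fabric_multiplier` times as many again between switch tiers.

def transceiver_heat_kw(gpus: int, watts_each: float = 12.5,
                        links_per_gpu: int = 1, fabric_multiplier: float = 1.0) -> float:
    gpu_side = gpus * links_per_gpu * 2       # two ends per GPU-to-switch link
    fabric = gpu_side * fabric_multiplier     # extra optics between switch tiers
    return (gpu_side + fabric) * watts_each / 1000.0


print(f"{transceiver_heat_kw(10_000):.0f} kW for a 10,000-GPU cluster")
```

With those assumptions and 12.5 W per module (the midpoint of the range above), a 10,000-GPU cluster carries about 500 kW of transceiver heat, roughly the load of five to eight of the sixty-to-hundred-kilowatt GPU racks discussed earlier.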

The picture that's forming is: retrofitting a legacy data center for AI isn't just about finding floor space for GPU racks. It's about re-engineering the entire thermal management system, potentially running liquid lines through floors that weren't designed for them, upgrading the power distribution to handle ten times the density, and dealing with a whole new category of heat sources in the interconnects.

I haven't even mentioned the power side yet. The prompt alluded to it — electricity supply is often the bottleneck. A single large AI training cluster can draw tens of megawatts. That's not "call the utility for a service upgrade" territory. That's "build a new substation" territory. In Northern Virginia, which is the largest data center market in the world, Dominion Energy has been struggling to keep up with demand. There are data center projects that have been approved but can't get energized because the transmission infrastructure isn't there.

I saw a Data Center Knowledge piece on this — they were reporting that power transformer lead times have stretched to two or three years in some markets. You can have your GPUs, your cooling system, your building — and still be waiting on a transformer.

The transformer is the chokepoint. These aren't off-the-shelf components — a large substation transformer is a custom-engineered piece of equipment that weighs hundreds of tons and requires specialized manufacturing capacity. There are only a handful of facilities globally that can build them. And every data center developer, every utility, every industrial project is competing for the same limited production slots.

Which means the timeline for a new AI data center isn't determined by how fast you can pour concrete. It's determined by how long the transformer queue is.
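The transformer-queue point is a critical-path argument: when tasks run in parallel, the slowest prerequisite sets the go-live date. A minimal Python sketch; every duration is a hypothetical placeholder except the transformer, which is set inside the two-to-three-year range mentioned above.

```python
# Sketch: with parallel workstreams, the site goes live when the slowest
# prerequisite finishes. Durations are hypothetical placeholders, except the
# transformer, which sits inside the two-to-three-year range cited above.

lead_times_months = {
    "pour slab and build shell": 12,     # hypothetical
    "procure and install cooling": 14,   # hypothetical
    "GPU delivery": 9,                   # hypothetical
    "substation transformer": 30,        # within the 2-3 year range cited
}


def energization_month(tasks: dict[str, int]) -> tuple[str, int]:
    """Return the gating task and its finish month, assuming every task
    starts on day one and runs in parallel."""
    gating = max(tasks, key=tasks.get)
    return gating, tasks[gating]


print(energization_month(lead_times_months))
```

Cutting the construction schedule by six months changes nothing here; only a shorter transformer lead time moves the date.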

That queue has been getting longer, not shorter. The Inflation Reduction Act and the broader electrification push have increased demand for transformers across the board. EVs, heat pumps, grid modernization: they all need transformers. AI data centers are competing with the entire energy transition for the same hardware. There's a deep irony here: the technologies we're deploying to decarbonize are competing with AI for the electrical infrastructure that both need to function.

The green transition and the AI boom are colliding in the transformer supply chain.

They're on a collision course, and I'm not sure the policy world has fully internalized this. You can't just declare that we're going to electrify everything and also build thirty gigawatts of AI data centers and also not reform the way we manufacture and permit electrical infrastructure. Something has to give.

Let's bring this back to the retrofit question. You're running a legacy data center. You've got spare power capacity — somehow, miraculously. You've got floor space. You want to add GPU capacity. Walk me through what actually has to happen.

Step one is a power audit. You need to know exactly what your existing electrical infrastructure can support, from the utility feed all the way down to the rack level. Most legacy data centers have a power distribution design based on that five-to-ten-kilowatt-per-rack assumption. The bus bars, the breakers, the cabling — it's all sized for that. If you're going to sixty kilowatts per rack, you're probably replacing most of it.
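A power audit eventually comes down to arithmetic like this: what one branch circuit can deliver, and how many a rack needs. This Python sketch uses the three-phase power formula, P = √3 × V × I × power factor, with assumed values: 415 V feeds, 32 A breakers, a 0.95 power factor, loading limited to 80 percent of the rating, and redundant A and B feeds. Your site's voltages, breaker sizes, and local electrical code will differ.

```python
import math

# Hypothetical power-audit sketch: how many branch circuits a rack needs.
# Assumptions: 415 V line-to-line three-phase, 32 A breakers, power factor 0.95,
# circuits loaded to at most 80% of rating, and 2N (A + B) feeds per rack.


def circuit_capacity_kw(volts_ll: float = 415.0, amps: float = 32.0,
                        power_factor: float = 0.95, derate: float = 0.8) -> float:
    """Usable real power of one three-phase circuit: sqrt(3) * V * I * PF * derate."""
    return math.sqrt(3) * volts_ll * amps * power_factor * derate / 1000.0


def circuits_needed(rack_kw: float, redundant: bool = True) -> int:
    """Circuits per rack; doubled when each rack has A and B feeds."""
    n = math.ceil(rack_kw / circuit_capacity_kw())
    return 2 * n if redundant else n


for rack_kw in (8, 60, 100):
    print(rack_kw, "kW ->", circuits_needed(rack_kw), "circuits with A/B feeds")
```

Under those assumptions one circuit delivers about 17.5 kW, so a legacy 8 kW rack needs a single A/B pair while a 60 kW rack needs four pairs and a 100 kW rack six. Every PDU, busway, and breaker upstream has to scale the same way.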

You're not just adding circuits. You're rebuilding the electrical backbone of the facility.

In many cases, yes. Step two is the cooling decision. Do you go direct-to-chip and keep your existing air handlers for the residual heat? Do you go full immersion and build out tank infrastructure? Each choice has different implications for floor loading, for ceiling height, for the plumbing runs. Immersion tanks are heavy — the fluid alone adds significant weight. Your building's structural engineering has to be evaluated.

I hadn't even thought about floor loading. A rack full of GPUs plus a cold plate system plus the coolant — that's a lot of mass concentrated in a small footprint.

Immersion is heavier. A fully populated immersion tank can weigh several tons. If you're on a raised floor — which most data centers are, for under-floor air distribution — you may need structural reinforcement. Or you abandon the raised floor entirely and go to a slab design, which is what most new AI-optimized facilities do.
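Floor loading is simple division once you know the mass. This Python sketch totals fluid and hardware mass over a tank's footprint and compares it with a floor rating. Every number is a hypothetical placeholder: 1,500 liters of a fluorocarbon-like fluid at 1.6 kg/L (hydrocarbon single-phase fluids are lighter than water, fluorocarbons considerably heavier), 1,200 kg of tank and hardware, a 2 m² footprint, and a 1,200 kg/m² floor rating.

```python
# Sketch: distributed floor load from an immersion tank vs. a floor rating.
# All inputs are hypothetical placeholders.

def floor_load_kg_m2(fluid_liters: float, fluid_density_kg_l: float,
                     dry_mass_kg: float, footprint_m2: float) -> float:
    """Total mass (fluid + tank + hardware) spread over the tank footprint."""
    total_kg = fluid_liters * fluid_density_kg_l + dry_mass_kg
    return total_kg / footprint_m2


tank = floor_load_kg_m2(fluid_liters=1_500, fluid_density_kg_l=1.6,
                        dry_mass_kg=1_200, footprint_m2=2.0)
floor_rating = 1_200.0  # hypothetical rated uniform load, kg/m^2
verdict = "OK" if tank <= floor_rating else "needs reinforcement or slab"
print(f"{tank:,.0f} kg/m^2 vs rating {floor_rating:,.0f}: {verdict}")
```

That tank weighs about 3.6 tonnes and loads the floor at about 1,800 kg/m², 50 percent over the assumed rating.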

The raised floor, which was the defining architectural feature of data centers for thirty years, becomes a liability.

The raised floor was brilliant for the CPU era. You could run cold air through the plenum, perforate tiles where you needed cooling, and reconfigure airflow as you rearranged racks. It was flexible, it was standardized, it worked. But it was designed for a world where a rack pulled maybe seven kilowatts. At sixty or a hundred kilowatts, the air volume you'd need to push through that plenum becomes absurd. The fans would be deafening, the energy cost of moving that much air would erase your efficiency gains, and you'd still have hot spots. The raised floor isn't just inadequate — it's actively counterproductive for high-density compute.

The AI data center doesn't look like the traditional data center. It has slab floors, higher ceilings for heat dissipation, liquid cooling loops running through the facility, and power distribution designed for density rather than flexibility. It's a different species of building.

This is why the "data center" label is increasingly misleading. We're using the same word for a colo facility built in 2005 full of general-purpose CPU racks and a purpose-built AI factory from 2024. They share almost nothing in terms of internal architecture. It's like calling both a bicycle and a freight train "transportation."

Which is why the new builds are happening. The retrofit path exists, but there's a ceiling on what you can achieve. If you're a hyperscaler planning for exaflop-scale training clusters, you're not going to try to shoehorn that into a building designed for the CPU era.

The new builds are happening fast. There's a Data Center Knowledge report that tracked over thirty gigawatts of new data center capacity announced globally in the past two years, with the majority of it designed for AI workloads from the ground up. These aren't speculative builds — they're pre-leased before ground is even broken.

For context, what's a typical nuclear reactor?

About one gigawatt. So we're talking about the equivalent of thirty nuclear reactors worth of data center capacity in the pipeline. And that's just what's been announced.

That's a number that should probably recalibrate how people think about the scale of this.

It's staggering. And each of those facilities has to solve the cooling problem at a scale that's never been attempted before. The largest AI training clusters today are in the tens of thousands of GPUs. The next generation is targeting hundreds of thousands. The thermal management for that isn't just a plumbing problem — it's a fundamental architectural constraint. At a certain point, you're not cooling a building full of computers. You're building a computer that happens to be the size of a building, and the cooling is part of the system architecture, not a facility service layered on top.

Let's talk about the liquid itself for a moment, because the prompt asked specifically. What's actually flowing through these systems? You mentioned dielectric fluids for immersion and treated water for direct-to-chip. Are there trade-offs beyond the obvious conductivity risk?

Dielectric fluids are expensive — we're talking hundreds of dollars per liter for some formulations. A single immersion tank might need thousands of liters. And the fluids have environmental considerations — some fluorocarbons have high global warming potential if released. The industry is moving toward more sustainable formulations, but it's a constraint.
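Those two ranges multiply quickly. A Python sketch of the fluid inventory, where the 24 tanks, 2,000 liters per tank, $300 per liter, and 10 percent spare stock are hypothetical placeholders chosen inside the ranges mentioned in the conversation.

```python
# Sketch: fluid volume and cost for an immersion deployment.
# Inputs are hypothetical placeholders inside the ranges discussed:
# hundreds of dollars per liter, thousands of liters per tank, dozens of tanks.

def fluid_inventory(tanks: int, liters_per_tank: float, price_per_liter: float,
                    spare_fraction: float = 0.1) -> tuple[float, float]:
    """Return (total liters including spare stock, total cost in dollars)."""
    liters = tanks * liters_per_tank * (1 + spare_fraction)
    return liters, liters * price_per_liter


liters, cost = fluid_inventory(tanks=24, liters_per_tank=2_000, price_per_liter=300)
print(f"{liters:,.0f} L of fluid, about ${cost / 1e6:.1f}M")
```

That works out to about 52,800 liters and roughly $15.8 million in fluid alone, before any stockpile beyond the 10 percent spare.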

The sustainability story has layers. The data center is energy-efficient in operation, but the cooling fluid itself has an environmental footprint.

Then there's water usage. Direct-to-chip systems with water — even treated water — consume water through evaporation if they're coupled with cooling towers. In water-stressed regions, that's becoming a political issue. Some jurisdictions are starting to restrict data center water usage. The industry is moving toward closed-loop systems that recirculate the same water, but closed-loop means you need chillers, and chillers mean more power consumption.
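Evaporative water use follows from the latent heat of water: every kilogram that evaporates carries away roughly 2.45 MJ. A Python sketch under an idealized assumption that all heat leaves by evaporation. In real towers some heat leaves as sensible heat while blowdown and drift add losses, so treat this as an order-of-magnitude figure.

```python
# Sketch: water an evaporative cooling tower consumes to reject a heat load.
# Idealized: all heat leaves by evaporation; blowdown and drift are ignored.

LATENT_HEAT_WATER = 2.45e6   # J/kg, approximate near 20 C


def evaporation_m3_per_day(heat_mw: float) -> float:
    kg_per_s = heat_mw * 1e6 / LATENT_HEAT_WATER
    return kg_per_s * 86_400 / 1_000.0   # about 1,000 kg of water per m^3


for mw in (1, 100):
    print(f"{mw:>3} MW rejected -> ~{evaporation_m3_per_day(mw):,.0f} m^3 of water per day")
```

Rejecting 1 MW this way evaporates about 35 m³ of water a day; a 100 MW campus, about 3,500 m³.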

Every solution creates a new problem at the edge.

Welcome to infrastructure engineering. There's a CEO of a company called Ferveret — they do liquid cooling — who made exactly this point in an interview. He said the industry is heading toward "waterless compute" as an ideal, but getting there requires rethinking the entire thermal chain from chip to atmosphere.

So the endgame is cooling systems that never need makeup water and never vent heat through evaporation.

That's the vision. It's achievable with liquid cooling plus dry coolers — essentially giant radiators that reject heat directly to the air without water. The trade-off is they're less efficient at high ambient temperatures. If you're building in Phoenix, dry coolers struggle in summer. If you're building in Norway, they work beautifully.
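The Phoenix-versus-Norway contrast comes down to approach temperature: a dry cooler can only bring coolant down to a few degrees above the outside air. This Python sketch assumes an 8 K approach, a 35 °C coolant supply requirement, and hypothetical summer design temperatures of 45 °C for Phoenix and 25 °C for Oslo.

```python
# Sketch: can a dry cooler alone meet the coolant supply temperature?
# Approach, required supply temperature, and site temperatures are hypothetical.

def achievable_supply_c(ambient_c: float, approach_k: float = 8.0) -> float:
    """Coldest coolant a dry cooler can deliver: outside air plus the approach."""
    return ambient_c + approach_k


def dry_cooler_ok(ambient_c: float, required_supply_c: float = 35.0,
                  approach_k: float = 8.0) -> bool:
    """True if outside air alone can cool the loop (no chiller or evaporative assist)."""
    return achievable_supply_c(ambient_c, approach_k) <= required_supply_c


for site, summer_peak_c in {"Phoenix": 45.0, "Oslo": 25.0}.items():
    verdict = ("dry cooling works" if dry_cooler_ok(summer_peak_c)
               else "needs chiller or evaporative assist")
    print(f"{site}: {summer_peak_c} C -> {verdict}")
```

With those assumptions Oslo can run on outside air alone, while Phoenix needs chillers or evaporative assist on its hottest days, which brings back either the power cost or the water cost.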

Which suggests that geography still matters for data centers, even in the cloud era. You can't abstract away the wet-bulb temperature.

You really can't. And we're seeing location decisions being driven by cooling considerations. The Nordic countries have become major data center markets because the ambient air is cold and the grid is clean. Ireland — where the prompt's author is originally from — has become a huge data center hub, to the point where data centers now consume something like eighteen percent of Ireland's total electricity. That's created its own political tensions.

That's a data center industry that's essentially a second grid within the national grid.

It's still growing. The Irish regulator has had to think seriously about whether to impose restrictions on new data center connections. It's a preview of the debates that are coming to other markets. When a single industry starts consuming a double-digit percentage of a nation's electricity, it stops being just a commercial question and becomes a matter of national energy policy. Who gets priority when supply is constrained — hospitals and homes, or the data center that trains the latest large language model?

That's a question that sounds hypothetical until suddenly it isn't.

It's going to stop being hypothetical faster than most policymakers expect. We're already seeing it in Northern Virginia, in Ireland, in Singapore, which imposed a moratorium on new data center construction a few years ago and has only recently begun to selectively lift it. The constraints are cascading from the physical to the political.

The AI data center story isn't just about cooling technology. It's about grid capacity, water rights, land use, transformer supply chains, and the geopolitics of where compute gets to live.

Which is why when someone says "AI data center," they're compressing all of that into three words. And most coverage doesn't unpack it. You get the stock photo of a windowless building and some hand-waving about "the cloud."

The cloud is just someone else's liquid-cooled GPU rack in a slab-floor building in Northern Virginia.

With a three-year transformer backlog.

Let me pull on one more thread from the prompt. The question about whether the interconnects need to be designed for liquid cooling. You mentioned optical transceivers — are there immersion-rated versions of these things?

Yes, and this is a relatively new development. Standard optical transceivers aren't designed for immersion — the fluid can degrade the connectors, seep into the modules, cause signal integrity problems. In the past few years, the major transceiver manufacturers have started offering immersion-rated variants with sealed housings and compatible materials. But they cost more, and they're not available for every form factor.

If you're doing immersion cooling, you're not just buying different cooling equipment. You're buying different networking hardware, different server chassis, potentially different power supplies. The bill of materials diverges significantly from a standard deployment.

That's why immersion has been slower to adopt than direct-to-chip, despite its superior thermal performance. Direct-to-chip lets you use mostly standard hardware with an add-on cold plate. Immersion requires rethinking the entire hardware stack. The total cost of ownership calculation is more complex than just "which cooling method is more efficient." You have to factor in the hardware compatibility premium, the fluid lifecycle costs, the operational complexity of maintaining immersion tanks. It's not that immersion is worse — in many ways it's technically superior — but the switching costs are higher, and inertia is a powerful force in infrastructure.

There's probably a crossover point where the density gets high enough that immersion becomes the only viable option, regardless of the hardware compatibility headaches.

That's the emerging consensus. Somewhere north of a hundred kilowatts per rack, air and direct-to-chip hybrids start to struggle. The next generation of AI accelerators — we're hearing numbers like one and a half to two kilowatts per GPU — is going to push more facilities past that threshold.

Two kilowatts per GPU. For context, what does a high-end consumer GPU draw?

An RTX 4090 draws about four hundred fifty watts. So we're talking about four times that, per chip, in the data center. And a single training node might have eight of them.

A single server is pulling fifteen-plus kilowatts just for the GPUs, before you count the CPU, the memory, the networking, the power supply losses.

That's today. The trajectory is steeper than most people realize. Every generation of AI accelerator has increased power consumption. There's no reason to think that trend stops. If anything, the economic incentives push the other way — you want the most powerful chip you can fab, and power efficiency is a secondary concern when the prize is training a model that might be worth billions. The power curve is being driven by the performance curve, and the performance curve is being driven by a market that's willing to pay almost anything for an edge.
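The server-level arithmetic from a moment ago, written out as a Python sketch. The 2 kW per GPU, eight GPUs per node, and the RTX 4090's 450 W come from the conversation; the 3 kW for CPUs, memory, and networking and the 94 percent power-supply efficiency are hypothetical assumptions.

```python
# Sketch: rough wall-power budget for one 8-GPU training node.
# Non-GPU load and PSU efficiency are hypothetical assumptions.

def node_power_kw(gpus: int = 8, gpu_kw: float = 2.0,
                  other_kw: float = 3.0, psu_efficiency: float = 0.94) -> float:
    """Wall power: (GPU + CPU/memory/network load) divided by PSU efficiency."""
    return (gpus * gpu_kw + other_kw) / psu_efficiency


print(f"GPUs alone: {8 * 2.0:.0f} kW; at the wall: ~{node_power_kw():.1f} kW")
print(f"vs. RTX 4090: {2000 / 450:.1f}x the power per chip")
```

That puts one node at about 20 kW at the wall, so four of them in a rack already land near 80 kW, squarely in the range that forces liquid cooling.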

Which brings us back to the fundamental question in the prompt: when we read about AI data centers being built, what should we picture? What's actually behind those flat, sterile walls?

Picture a building where the floor isn't raised, where pipes run overhead in every aisle, where the hum of air handlers is replaced by the quieter but more ominous sound of pumps moving coolant. Picture racks that are heavier and denser than anything in a traditional data center, with fiber optic cables snaking out in every direction. Picture substations on site because the power draw is equivalent to a small city. And picture all of this being built at a pace that the supply chain — for transformers, for cooling equipment, for the fluids themselves — is struggling to match.

The sterile box contains an industrial plant.

That's exactly what it is. A data center is a factory for compute. And an AI data center is a factory optimized for one specific manufacturing process: matrix multiplication at scale. Every design decision flows from that.

Matrix multiplication as the product. Heat as the byproduct. And the entire facility is a machine for managing the second while maximizing the first.

Which is why the cooling isn't an afterthought — it's the defining constraint. You can't do the matrix multiplication if you can't remove the heat. And the physics of heat removal, as we've been discussing, has hard limits that no amount of software optimization can bypass.

Software can schedule workloads more efficiently, shift training jobs to times when ambient temperatures are lower, throttle performance when cooling capacity is maxed out. But at the end of the day, a watt is a watt.
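Throttling is the software lever in that list. A hypothetical Python sketch of the simplest policy: if the requested GPU power exceeds what the cooling loop can currently reject, scale every GPU's power limit down by the same factor. The function name and the uniform-scaling policy are illustrative assumptions; production schedulers can prioritize jobs differently.

```python
# Hypothetical sketch: cap GPU power so total heat stays within what the
# cooling loop can currently reject, using one uniform scaling factor.

def power_caps_w(requested_w: list[float], cooling_capacity_w: float) -> list[float]:
    """Return per-GPU power limits whose sum never exceeds cooling capacity."""
    total = sum(requested_w)
    if total <= cooling_capacity_w:
        return list(requested_w)              # headroom: no throttling needed
    scale = cooling_capacity_w / total        # same fractional cut for every GPU
    return [w * scale for w in requested_w]


caps = power_caps_w([2_000.0] * 8, cooling_capacity_w=14_000.0)
print([round(c) for c in caps])  # each GPU capped at 1750 W
```

Eight GPUs asking for 2 kW each against 14 kW of cooling get capped at 1,750 W apiece. The heat goes down only because the compute goes down with it.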

A watt dissipated as heat has to go somewhere. That's the first law of thermodynamics. AI hasn't repealed it.

Give it time.

I don't think the laws of physics are on the roadmap for GPT-5.

That's a missed opportunity. "Announcing OpenAI's new model: it also violates conservation of energy."

The ultimate paper clip maximizer.

To land this: the answer to the prompt's core question — how do you retrofit a legacy data center for AI — is that you can, up to a point. Direct-to-chip liquid cooling is the bridge. But you're going to hit limits in power distribution, floor loading, and cooling capacity that may make a new build the more practical option. And the new builds are a different species of facility entirely.

The "AI data center" term that the prompt rightly flags as imprecise — it's useful as a shorthand for "a data center designed from the ground up for GPU-class power density and liquid cooling." The distinction from a traditional data center isn't cosmetic. It's architectural, electrical, and thermodynamic.

The next time someone reads an article about a new AI data center breaking ground, they'll know the substation is probably the long pole, the floor is probably concrete, and there's a very expensive liquid running through pipes above racks of GPUs that cost more than most houses.

That liquid might be a fluorocarbon that's harder to source than the GPUs themselves.

Infrastructure is never as boring as it looks.

Infrastructure is where all the interesting constraints live. The silicon gets the headlines, but the plumbing determines what's actually possible.

Now: Hilbert's daily fun fact.

Hilbert: The word "quasar" is a portmanteau of "quasi-stellar radio source," coined in 1964 by astrophysicist Hong-Yee Chiu. But in the early 1980s, a team using the Parkes radio telescope in Australia, while surveying the Magellanic Clouds, briefly catalogued an object as a "quasi-stellar optical transient" in their internal logs, producing the unfortunate acronym QSOT, which was pronounced aloud exactly once in a control room before being permanently retired in favor of "optical quasar."

...right.

I have so many questions, but I'm not sure I want the answers.

So here's the open question we'll leave with: if the transformer backlog is two to three years and the GPU power curve keeps climbing, does the industry hit a wall where the infrastructure simply can't be built fast enough to keep up with demand? And what does that do to the economics of AI compute?

That's the trillion-dollar question. Thanks to our producer Hilbert Flumingtop. This has been My Weird Prompts. If you enjoyed this, leave us a review wherever you get your podcasts — it genuinely helps.

See you next time.