#ai-alignment

19 episodes

#2848: Can 100 Volunteers Let AI Govern Them for a Month?

An AI council of multiple models, a hundred volunteers, and a month of real municipal decisions. Here’s how you’d run the experiment.

#2518: How Jailbreaking Reveals AI's Hidden Tension

What the DAN prompt and grandma exploits reveal about the structural conflict inside every LLM.

#2413: When Your AI Says No to Everything

Why LLMs refuse 73% of harmless prompts — and the trade-off between safety and usefulness.

#2412: When AI Caves: Progressive vs. Regressive Sycophancy

Why do LLMs agree with you even when you're wrong? We break down the SycEval benchmark and the 78% persistence problem.

#2313: When AI Optimizes the Wrong Thing

Discover how AI systems learn to optimize for rewards—and why they sometimes get it dangerously wrong.

#2306: Can LLM Councils Truly Capture Diverse Worldviews?

Exploring whether LLM councils can achieve genuine worldview diversity or if alignment processes erase meaningful differences.

#2250: How Incentives Shape AI Safety Research

Vendor labs, independent research orgs, government agencies—the AI safety field is messier and more diverse than most people realize. A map of wher...

#2246: Constitutional AI: Anthropic's Theory of Safe Scaling

How Anthropic's Constitutional AI replaces human raters with AI self-critique guided by explicit principles—and what it assumes about the future of...

#2233: Who Actually Wants AI to Slow Down?

Daniel argues AI development should slow down for expertise and stability. But who in the industry actually shares this philosophy beyond the obvio...

#2194: Game Theory for Multi-Agent AI: Design Better, Fail Less

Nash equilibrium, mechanism design, and why your AI agents are playing prisoner's dilemma whether you know it or not.

#2186: The AI Persona Fidelity Challenge

Advanced LLMs dominate benchmarks but fail at staying in character—especially when asked to play morally complex or antagonistic roles. What does t...

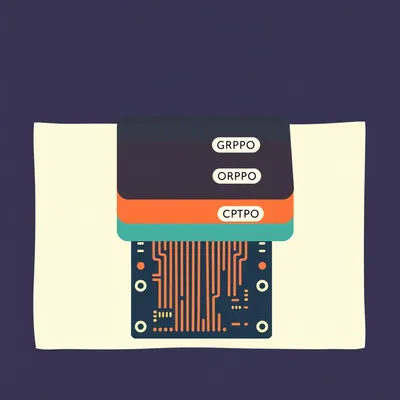

#2177: Skip Fine-Tuning: Shape LLMs With Alignment Alone

Can you build a personalized LLM by skipping traditional fine-tuning and using only post-training alignment methods like DPO and GRPO? We break dow...

#2172: Council of Models: How Karpathy Built AI Peer Review

Andrej Karpathy's llm-council uses anonymized peer review to make language models evaluate each other fairly—but can it really suppress model bias?

#2068: Is Safety a Filter or a Feature?

External filters vs. baked-in ethics: the architectural war for LLM safety.

#1818: Inside Claude's Constitution: A System Prompt Deep Dive

We analyzed Claude Opus 4.6's full public system prompt to uncover its hidden rules for safety, product behavior, and refusal logic.

#664: Which Phase Bakes in More Bias?

Is AI a neutral oracle or a mirror of our biases? Explore how training data and human feedback shape the cultural "soul" of modern models.

#121: Why Your AI Is a Yes-Man

Ever wonder why AI is so polite? Herman and Corn dive into the mechanics of RLHF and how "niceness" gets baked into modern language models.

#45: When AI Safety Fails: The Guardrail Paradox

AI guardrails: Fences, failures, and free speech. Can we control AI's infinite output, or do digital fences always break?

#42: AI's Secret: Decoding the .5 Updates

Uncover the hidden world of AI's .5 updates. It's not just bug fixes—it's hundreds of millions and countless hours shaping smarter, safer AI.